Music has always been an integral part of human culture. From ancient times to modern-day, music has evolved and transformed in various ways. With the advent of technology, music creation and discovery have taken a wild and increasingly influential new turn. Enter Generative AI – For those living under a rock, Artificial Intelligence (AI) is a technology that has revolutionized the way we do almost everything, including how we create and discover music.

@OpenAI #Jukebox (https://www.openai.com/research/jukebox) is a prime example of how AI is bridging the gap between music and future technology. In OpenAI’s words, #OpenAI-Jukebox “produces a wide range of music and singing styles, and generalizes to lyrics not seen during training. All the lyrics below have been co-written by a language model and OpenAI researchers.” It can generate original songs and tracks in various genres and styles, much as the creativity powerhouse known simply as DALL-E does for image creation. It uses deep learning to learn the patterns and structures of existing songs and create new ones.

So does the future of music creation and discovery lie in generative AI tools like OpenAI Jukebox, or design and art creation in tools like DALL-E? Either way, it’s the season of all things #OpenAI. These days you can’t escape a ChatGPT meme or SNL skit powered by the little chatterbox, providing endless possibilities and countless hours of entertainment for experimentation with different words, phrases, images, sounds, styles, and genres. And did I mention they can also help you work smarter, not harder, with e-mail responses and documentation creation, or even by building apps for you (i.e., code scripting)?

Generative AI tools like OpenAI Jukebox are not limited to creating new songs; they also have the ability to remix existing ones. This opens up a whole new world of possibilities for artists who want to experiment with their work or collaborate with other musicians.

The use of generative AI tools in music creation also raises questions about copyright laws and ownership rights. As these tools become more advanced, it will be interesting to see how they impact traditional copyright laws.

As a former EDM DJ, I’m excited to see where OpenAI takes its Jukebox research – it is just one example of how AI can revolutionize the world of music creation and discovery IMHO. As technology continues to evolve at this crazy rapid pace, it’s exciting to think about what other possibilities lie ahead for the world of AI and (fill in here). The future looks bright for humans and musicians and artists and fans alike as we march on this yellow brick journey towards what? Emerald City or a more innovative musical landscape powered by artificial intelligence? Who knows? Let’s ask ChatGPT!


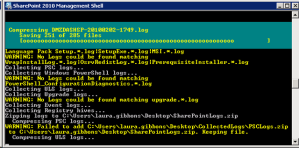

CICD (DevOps) for Data Science: Part Deux

It’s been some time since I presented Part 1 of this DevOps for Data Science short anthology. Since then I have been working on scripting out this solution for a series of presentations I am doing as part of a Discovery & Insights Roadshow.

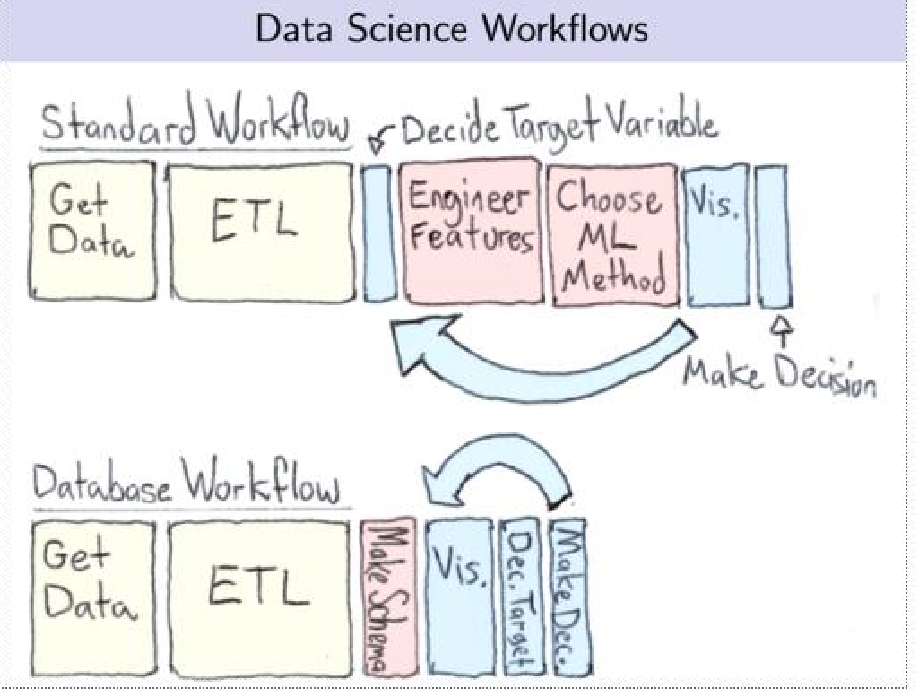

The example draws inference (pun intended) from anomaly detection and predictive maintenance scenarios since I seem to spend a chunk of time working in this space. This is specifically an implementation of a Continuous Integration (CI) / Continuous Delivery (CD) pipeline in support of the applications that support those scenarios – Typically, when one is developing an AI application, two parallel motions take place:

a) A data science team is working on building out the machine learning (ML) models; often the handoff occurs when they publish out an endpoint that gets integrated into the app.

b) AppDev or developers work on the build of the application and are typically responsible for exposing it to end users via a web / mobile app, or they take the pre-trained model endpoint and call it from within an internal business process application.

The example use case for this Continuous Integration (CI)/Continuous Delivery (CD) pipeline is a fairly standard / typical Anomaly Detection and Predictive Maintenance machine learning scenario.

In short, the pipeline is designed to kick off for each new commit, run the test suite using the model parameters required as input (features, e.g.), and test the outputs generated (usually in the form of a score.py file) – if the test passes, the latest build branch is merged into master, which gets packaged into a Docker container and possibly deployed to Kubernetes if the operationalization requirements demand cluster-scale support.
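To make the "test the outputs" step a bit more concrete, here is a minimal sketch of the kind of check a CI job could run on every commit. The `run` function is only a stand-in for a real score.py entry point, and the feature names and the 0-to-1 score range are assumptions for illustration, not the actual pipeline code.

```python
import json


def run(raw_json: str) -> str:
    """Stand-in for a score.py entry point (hypothetical): one anomaly score per record."""
    records = json.loads(raw_json)["data"]
    scores = []
    for rec in records:
        # Toy rule: scale vibration into [0, 1]; a real model call would go here.
        score = min(max(rec["vibration"] / 10.0, 0.0), 1.0)
        scores.append(score)
    return json.dumps({"scores": scores})


def check_scoring_contract(payload: dict) -> bool:
    """CI-style check: one score per input record, each a float in [0, 1]."""
    result = json.loads(run(json.dumps(payload)))
    scores = result.get("scores", [])
    if len(scores) != len(payload["data"]):
        return False
    return all(isinstance(s, float) and 0.0 <= s <= 1.0 for s in scores)


if __name__ == "__main__":
    # Hypothetical sensor readings used as the test input features.
    sample = {"data": [{"vibration": 2.5, "temperature": 71.0},
                       {"vibration": 12.0, "temperature": 95.0}]}
    print("scoring contract holds:", check_scoring_contract(sample))
```

If a check like this fails, the pipeline would stop before the merge and the Docker packaging step, which is the whole point of running it per commit.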

I have included a #Jupyter notebook for you to check out the process of deploying to @Docker and then productionalizing using @Kubernetes – Part of the latter productionalized code set includes the process for gathering data from how your users interact with your model. This is important from a retraining perspective because you need to have a closed loop architecture in order to enable said functionality.

Using Azure to Retrain Production Models Deployed Using Kubernetes

As a side note, Azure Databricks is a managed Spark offering on Azure and customers already use it for advanced analytics. It provides a collaborative Notebook based environment with CPU or GPU based compute clusters, much like if not identical to the Jupyter Notebook mentioned above. Often, I get asked about DevOps specifically for Databricks in addition to Azure ML, so I wanted to explicitly reference the fact that you can use either or both.

In this section, you will find additional information on how to use the Azure Machine Learning SDK with Azure Databricks. You can train a model using Spark MLlib and then deploy the model to ACI/AKS from within Azure Databricks just like the example above. In addition, you can also use the Automated ML capability (public preview) of the Azure ML SDK with Azure Databricks or without. A natural cloud convergence between ML/AI service offerings is currently underway across all vendors. This enables things like:

- Customers who use Azure Databricks for advanced analytics can now use the same cluster to run experiments with or without automated machine learning.

- You can keep the data within the same cluster.

- You can leverage the local worker nodes with autoscale and auto termination capabilities.

- You can use multiple cores of your Azure Databricks cluster to perform simultaneous training.

- You can further tune the model generated by automated machine learning if you choose to.

- Every run (including the best run) is available as a pipeline, which you can tune further if needed.

- The model trained using Azure Databricks can be registered in an Azure ML SDK workspace and then deployed to Azure managed compute (ACI or AKS) using the Azure Machine Learning SDK.

Explaining #Containers / #Kubernetes to a Child : How To Become a Storytelling Steward Using Gamification & Graphic Novels (Comics)


If someone asked you to explain the benefits of #Containers / #Kubernetes as though you were speaking to a child, do it in under 2 minutes & guarantee said kiddo’s comprehension, could you do it?

I was tasked recently with doing the same thing by my COO using Machine Learning as the main talking point. To elucidate, I decided to pose the same question to several peers who graciously entertained this whim o’ mine. While they provided technically correct explanations, often, they parked their response somewhere between boredom-block and theoretical thoroughfare. Yawn!

What those very intelligent practitioners failed to remember was that this was NOT the latest round of stump-the-chump; the goal was to explain Machine Learning in a way that a child could grok without “adult-splaining” / “grownup-eze” or other explanatory methods – Add to this the goal of keeping the child engaged for the full 2 minutes –> well, shoot, ++ to you, dear reader turned supreme storytelling savant, if you made that happen. While we are at it, why not add the ability to explain ML while avoiding the dreaded “eyes glaze over” effect that most listeners don when tuning out their brain? This ‘Charlie Brown’ <whoah whoah whoah> adult vernacular riposte is nearly always reflected back to the speaker via those truth telling eyes of yours, enabling the Edgar Allan Poe in us all. Huh? What I mean is the tell-tale Pavlovian heart response to any “Data Science-based” summarization, in my experience.

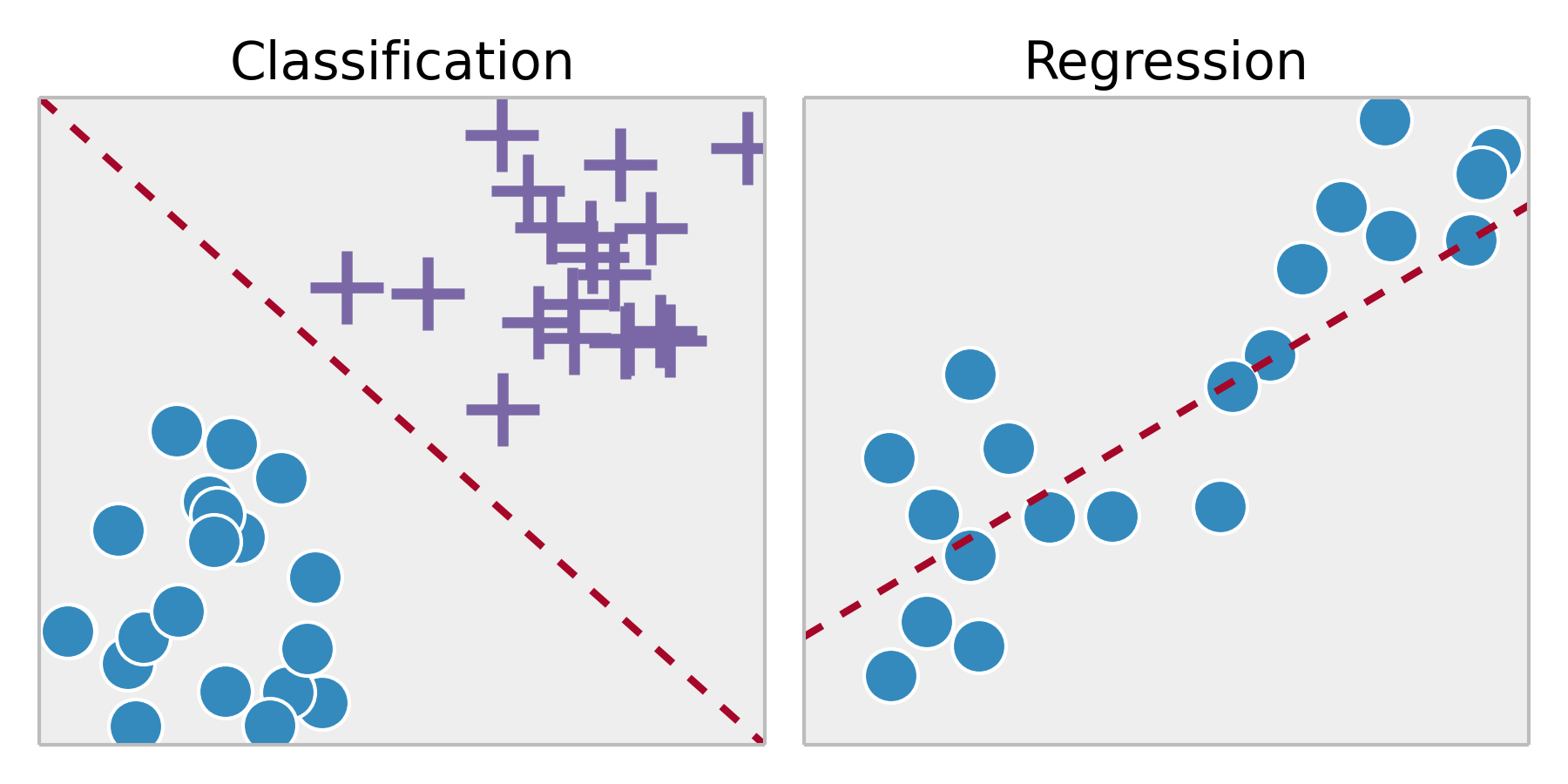

Instead, I described two scenarios involving exotic fruit –> the 1st included the name for each of those curious fruits (aka labels), which was the basis for her being able to label them on demand accordingly. The 2nd scenario also involved exotic fruits BUT the difference was that she was NOT provided any names ahead of time yet still was tasked with naming said items – And for those data scientists reading this, these, naturally, were metaphors for supervised and unsupervised learning.

Originally, I had prepared a similar talk for a ML centric presentation I was set to give to a community based data science event (later shared on this blog) – It contains about 90% comics / image iconography instead of laborious text per slide & was received incredibly well. In fact, when delivered to a 200+ audience, it was met with much applause and higher than normal attendee survey satisfaction scores. Simplicity & pacing were key. Remember, an image speaks 1000 words, and to a child who often learns experientially / visually, well, it becomes the storyteller’s handbook to the hive mind of children everywhere :).

By the way, you can stop me next time when I diverge so sharply from the path.

But now we are back –> putting to bed my thorough digression & pulling you back to my 1st sentence above:

If someone asked you to explain the benefits of #Containers / #Kubernetes as though you were speaking to a child, do it in under 2 minutes & guarantee said kiddo’s comprehension, could you do it?

This awesome comic/bedtime story is one way to answer with a resounding YES ! Meet Phippy & Zee and follow their adventures as they head off to the Zoo: Phippy Goes To The Zoo_A_Kubernetes_Story: https://azure.microsoft.com/en-us/resources/phippy-goes-to-the-zoo/en-us/

DevOps for Data Science – Part 1: ML Containers Becoming an Ops Friendly Citizen

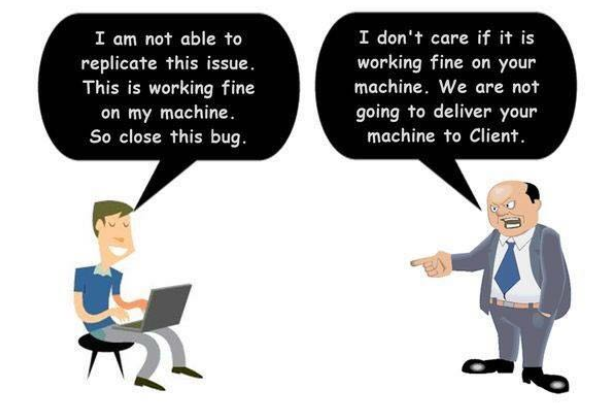

So, for the longest time, I was in the typical Data Scientist mind space (or at least, what I personally thought was ‘typical’) when it came to CI/CD for my data science project implementations –> DevOps was for engineering / app dev projects; or lift and shift/Infrastructure projects. Not for the work I did – right? Dev vs. IT (DevOps) seemed to battle on while we Data Scientists quietly pursued our end results outside of this traditional argument:

At the same time, the rise & subsequent domination of K8s (Kubernetes) and Docker et al <containerization> was the 1st introduction I had to DevOps for Data Science. And, in the beginning, I didn’t get it, if I am being honest. Frankly, I didn’t really get the allure of containers <that was my ignorance>. When most data scientists start working, they realize that the majority of data science work involves getting data into the format needed for the model to use. Even beyond that, the model being developed will need to be operationalized as part of some type of web/mobile/custom application for the end user.

Now most of us data scientists have the minimum required / viable processes to handle things like versioning / source control et al. Most of us have our model versions controlled on Git. But is that enough?

I was running an Image Recognition workshop for a customer that required several specific image pre-processing & deep learning libraries in order to effectively script out an end to end / complete image recognition + object detection solution – In the end, it was scripted using Keras on TensorFlow (on Azure) using the COCO-Stuff 2018 dataset + YOLO real-time object detection that I augmented with additional images/labels specific to my use case & industry (aka ‘Active Learning’):

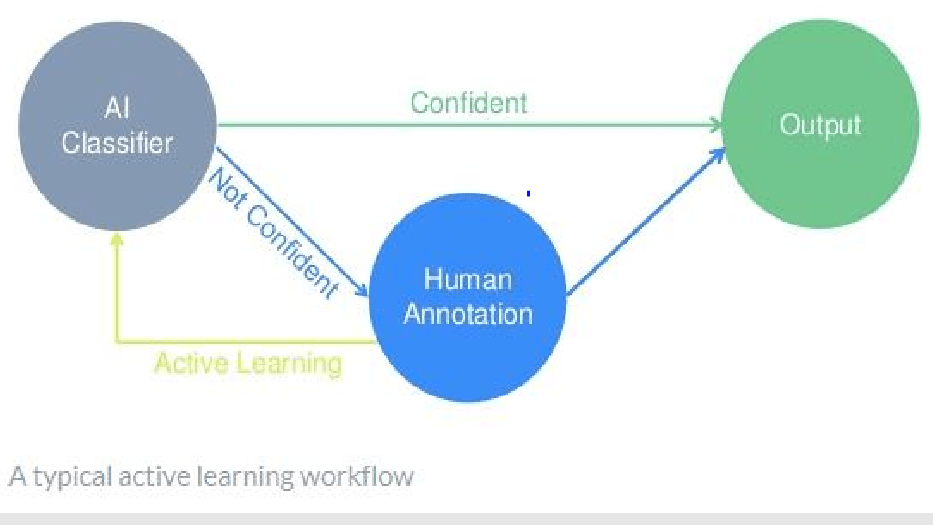

- Active Learning Workflow

Active Learning is an example of semi-supervised learning in which an algorithm interactively asks for more labeled data in order to affect model performance positively.
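One common way an algorithm "asks" for more labels is uncertainty (least-confidence) sampling: rank the unlabeled items by how unsure the current model is and send the least certain ones to a human labeler. The sketch below shows that idea only; it is not the workflow I scripted for the workshop, and the image ids and probabilities are made up for illustration.

```python
from typing import Dict, List


def least_confident(pool_probs: Dict[str, List[float]], k: int) -> List[str]:
    """Return the k unlabeled item ids whose top class probability is lowest."""
    # Confidence of a prediction = probability of its most likely class.
    ranked = sorted(pool_probs, key=lambda item_id: max(pool_probs[item_id]))
    return ranked[:k]


if __name__ == "__main__":
    # Hypothetical class probabilities from the current model for unlabeled images.
    pool = {
        "img_001.jpg": [0.98, 0.01, 0.01],
        "img_002.jpg": [0.40, 0.35, 0.25],
        "img_003.jpg": [0.55, 0.44, 0.01],
        "img_004.jpg": [0.90, 0.05, 0.05],
    }
    # These are the images to send to a human labeler next.
    print(least_confident(pool, k=2))
```

Once those items come back labeled, they join the training set, the model is retrained, and the loop repeats.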

Labeling is often rushed because it doesn’t carry the cachet of other steps in the typical data science workflow – And getting the data preprocessed (in this case, Images and Labels) is a necessary evil if you want to achieve better model performance in terms of accuracy, precision, recall, F1 – whichever, given a specific algorithm in play & its associated model evaluation metric(s):

And it was during the setup/installation of these libraries when it occurred to me so clearly the benefit of Data Science containerization – If you have always scripted locally or on a VM, you will understand the pain of maintaining library / package versions whether using python or R or Julia or whatever the language du jour you use to script your model parameters / methods etc.

And when version conflicts come into play, you know how much time gets wasted searching / Googling / Stack Overflowing a solution for a resolution (ooh, those version dependency error messages are my FAVE <not really, sigh…but I digress>)…

Even when you use Anaconda or miniconda “conda” for environment management, you are cooking with gas until you are not: like when your project requirements demand you pip/conda install very rare/specific libraries/packages that have other pkg version dependencies / prerequisites, only to hit an error during the last package install step advising that some other upstream pkg version that is required is incorrect / outdated, thus causing your whole install to roll back. Fun times <and this is why Cloud infrastructure experts exist>; but it takes away from what Data Scientists are chartered with doing when working on a ML/DL project. <Sad but true: Most Data Scientists will understand / commiserate with what I am describing as a necessary evil in today’s day and age.>

OK and now we are back: Enter containers – how simple is it to have a Dockerfile (for example) which contains all the commands a user could call via the CLI to assemble an image including all of the packages/libraries and their dependencies by version for a set python kernel <2 or 3> and version (2.6, 2.7, 3.4, 3.5, 3.6 etc.) for this specific project that I described above? Technically speaking, Docker can build images automatically by reading the instructions from this Dockerfile. Further, using docker build, users can create an automated build that executes several command-line instructions in succession. –> Right there, DevOps comes clearly into the picture, where the benefits of environment management (for starters) and the subsequent time savings / headache avoidance become greater than the learning curve for this potentially new concept.

There are some other points to note to make this happen in the real world: Something like VSTS would need to be wrapped into a Docker Image, which would then be put on a Docker container registry on a cloud provider like Azure. Once on the registry, it would be orchestrated using Kubernetes.

Right about now, your mind is wanting to completely shut down. Most data scientists know how to provide a CSV file with predictions / or a scoring web service centered on image recognition/ classification handed off to a member of your AppDev team to integrate / code into an existing app.

However, what about versioning / controlling the model version? Each time you hyper tune parameters within a model you are potentially changing the model performance – How do you know which set of ‘tunes’ resulted in the highest evaluation post scoring? I think about this all of the time because even if you save your changes in distinct notebooks (using JupyterHub et al), you have to be very prescriptive on your naming conventions to reflect the changes made to compare side by side across all changes during each tuning session you conduct.
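One lightweight alternative to relying on notebook naming conventions is to append every tuning run to a small log and ask the log which run scored best. This is just a sketch under my own assumptions (the file name, parameter names, and metric values are hypothetical), not a replacement for a proper experiment tracking service.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def log_run(log_path: Path, params: dict, metric: float) -> None:
    """Append one tuning run (hyperparameters plus evaluation score) as a JSON line."""
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps({"params": params, "metric": metric}) + "\n")


def best_run(log_path: Path) -> Optional[dict]:
    """Return the logged run with the highest metric, or None if nothing is logged."""
    if not log_path.exists():
        return None
    with log_path.open(encoding="utf-8") as fh:
        runs = [json.loads(line) for line in fh if line.strip()]
    return max(runs, key=lambda r: r["metric"]) if runs else None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log_file = Path(tmp) / "tuning_runs.jsonl"  # hypothetical log location
        # Hypothetical tuning sessions: learning rate / batch size vs. F1 score.
        log_run(log_file, {"learning_rate": 0.01, "batch_size": 32}, 0.81)
        log_run(log_file, {"learning_rate": 0.001, "batch_size": 64}, 0.87)
        print(best_run(log_file))
```

Because the log is a plain text file, it can be committed to Git next to the model code, so the winning set of ‘tunes’ is versioned along with everything else.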

This doesn’t even take into account once you pick the best performing model, actually implementing version control for the model that has been operationalized in production and the subsequent code changes required to consume it via some business app/process. How does the typical end user interact with the operationalized scoring system once introduced to them via the app? How will it scale!? All this would involve confidence testing, checking against a set threshold, and triggering some type of closed loop action system when anomalies are detected. Plus, how do you get sign off from different parties and orchestration between different cloud & on-premise servers that support the business process (with all the corporate firewall / networking / data movement / storage / encryption requirements & rules)? Maybe you have others to think about this – But if you want to be a data scientist worth having the overly used moniker applied to your role, you should care enough to learn about DevOps and how you can be a better corporate citizen & not just the Rockstar Data Scientist who alienates everyone to get to the root cause. IMHO:

This should be part of your Data Scientist process. Period. Hard stop. Not only for you, but for others that come after you or are on your team. No need to reinvent the wheel. Plus, for organizations that have strict CI/CD / DevOps procedures and limited Ops staff, the automation that you can bring with your project deliverables will win you favor, at a minimum, for considering this aspect that is vital to all other appDev type projects / roles in your company.

Integrating Databricks with Azure DW, Cosmos DB & Azure SQL (part 1 of 2)

I tweeted a data flow earlier today that walks through an end-to-end ML scenario using the new Databricks on Azure service (currently in preview). It also includes the orchestration pattern for ETL (populating tables, transforming data, loading into Azure DW etc), as well as the SparkML model creation stored on CosmosDB along with the recommendations output. Here is a refresher:

Some nuances that are really helpful to understand: Reading data in as CSV but writing results as parquet. This parquet file is then the input for populating a SQL DB table as well as the normalized DIM table in SQL DW both by the same name.

Selecting the latest Databricks on Azure version (4.0 version as of 2/10/18).

Using #ADLS (Data Lake Storage, my pref) &/or blob.

Azure #ADFv2 (Data Factory v2) makes it incredibly easy to orchestrate the data movement from 3rd party clouds like S3 or on-premise data sources in a hybrid scenario to Azure with the scheduling / tumbling one needs for effective data pipelines in the cloud.

I love how easy it is to connect BI tools as well. Power BI Desktop can connect to any ODBC data source and specifically to your Databricks clusters by using the Databricks ODBC driver. Power BI Service is a fully managed web application running in Azure. As of November 2017, it only supports Spark running on HDInsight. However, you can create a report using Power BI Desktop and upload it to an Azure service.

The next post will cover using @databricks on @Azure with #Event Hubs !

Learning From the Pang of Quantitative Defeat

…@NFLFantasy #PPR Matchup failure, that is.

Let me preface this by saying that immediately after drafting my team, a manic flurry of clicks & non-favorable sighs – I measured my lineup for week 0 & beyond in terms of likelihood to win the playoffs. And I came in last (discussed in a previous post).

My win/loss season ratio prediction ala @NFLFantasy was terrible (regular season = 6 wins : 8 losses. Ouch)… Luckily, I troll the waiver wire and pluck unknowns before they become titans (Kareem Hunt, Alvin Kamara to name a few from this season), or slot in weekly bosses before the mainstream agree they should be added to some Deep Sleeper Waiver Report. It is what has propelled me since Year 1. It is what I am most proud about in terms of my algorithm’s fantasy predictions. And emotion aside, statistically, after 4 years of training, I can attest to its success in terms of accurate machine predictability season after season, week after week.

Because of this trolling, I am now projected to be #1 again in the League with either a 10:4 or 9:5 ratio.

Why that is important is I lost 4 games in a row and forgot this little fact above. I mean I was undefeated for the first 5 weeks. So, having 4 straight back to back losses hurt and I went underground to lick my wounds. Until I was reminded that I was always going to have a minimum of 4 losses. And even if they were back-to-back, perhaps they are now out of the way (unless the 9:5 ratio comes true). In either case, I am slotted to take back my #1 league bragging rights as we move into playoffs.

So, what I learned from my momentary lapse of positive model juju is that when I ignore the facts and outcomes of my model, no one wins. I embrace those losses because they were always part of my 2017 Fantasy Football predestination.

Week 4 – @NFLFantasy PPR Play/Bench Using #MachineLearning

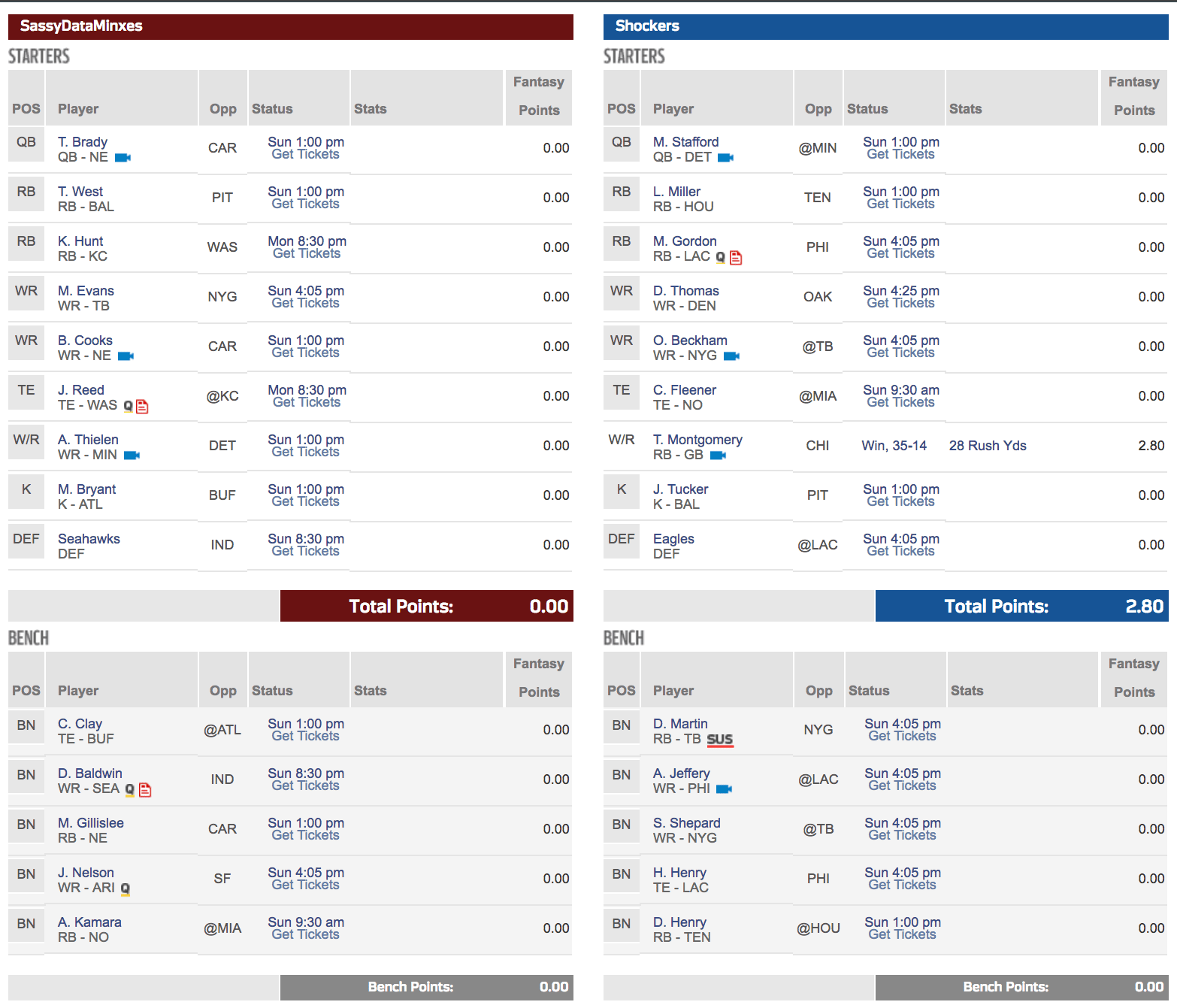

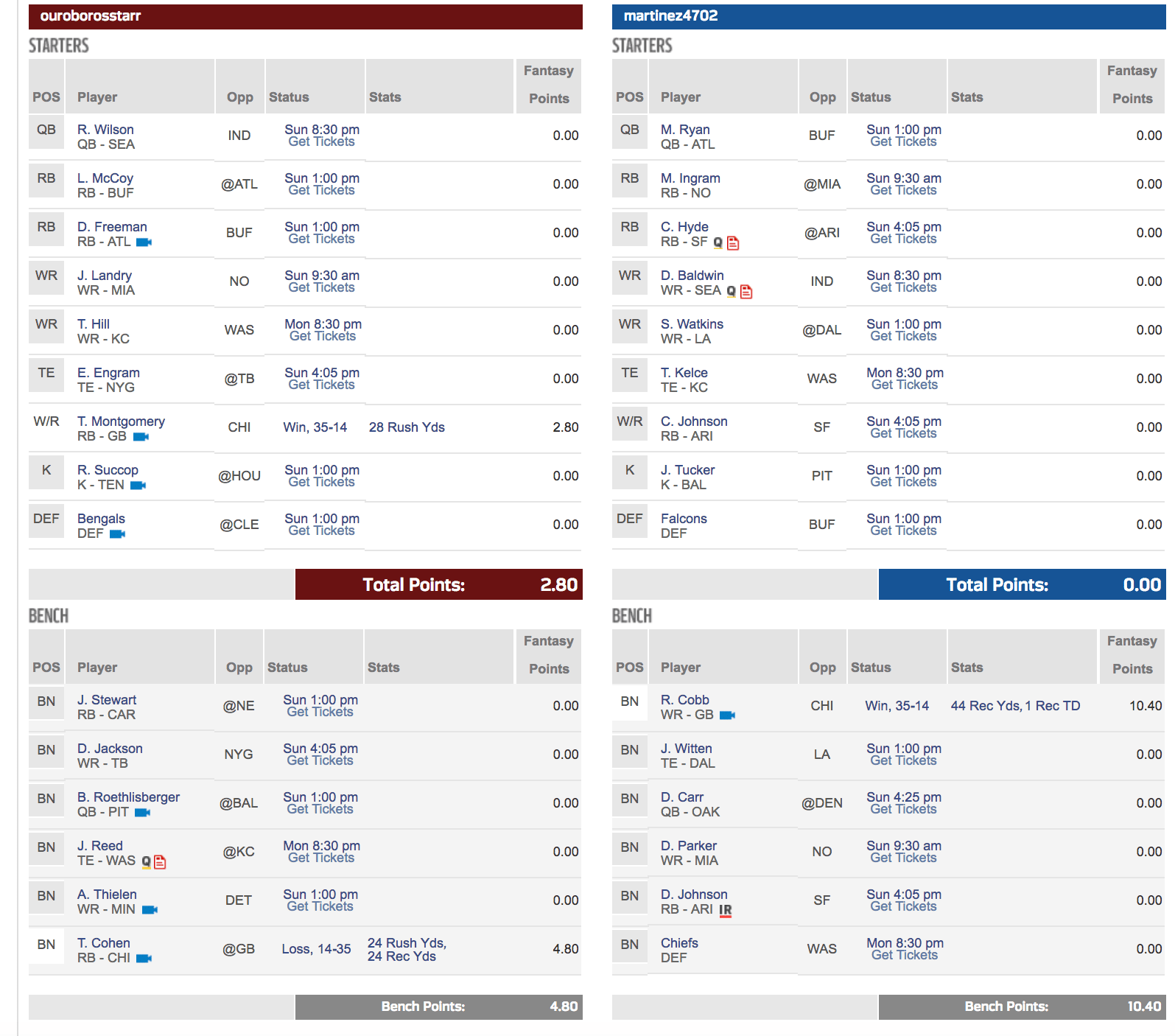

Week 4 – While it is 5am on Sunday and gameplay kicks off at 9:30am today (love those London games), I am technically getting this posted before the start. Ok, well, I missed Thursday – fair enough. But we are getting there:

League 1 (PPR):

As I mentioned in my Week 3 post, there are some things that even the best algorithmic usage cannot predict (today); injuries are one of those things that today are sort of hard to determine unless a player has a propensity or an existing / underlying issue that one is tracking – Very soon, I believe that the development of the RFID program that each player’s uniform now carries will yield better and better data points that can, in fact, become the Minority Report of the NFL season and predict the next great injury, based on biometric + performance + known medical history with a fair degree of certainty. But we are not there yet (at least, not in what is being provided to those of us NOT connected to the teams/NFL <– a girl can dream about this kind of data coming from this type of connection, can’t she?)

You’ll notice I have Jordan Reed in my line up – this is a hard one. He is expected to play but at what level? I picked up Charles Clay as my backup but unfortunately, Reed doesn’t play until Monday; Clay plays at 1pm today. So, I will refresh my model and then see if Reed drops any in the ranking. If nothing else, it will give me a better picture of his points earned potential IF he were to play with his existing injury (albeit healed or otherwise).

I swapped out Doug Baldwin who is also questionable this week in terms of his health; and slotted Adam Thielen in. I also picked up Alvin Kamara off of the waiver wire. I have a feeling about him; as does my model. This week, he ranks right next to Terrance West given the matchup and alternates on my bench (oh woe is my RB situation) – Thank goodness for a stout WR lineup, even with injuries et al.

All in all, I am most worried about this matchup. This might be a week for a loss, even if numerically, it looks like a win on NFL.com. I guess I can thank Ty M. for making it a possibility. But Odell B. Jr. might have something else to say about that. Oh, that and Melvin Gordon’s questionable status: if only it were so…Again, I love to dream…

League 2 (Standard): What made me thankful in League 1, has plagued me in League 2 (oh, Ty!) – In this league, I have already pulled out Jordan Reed because E. Engram ranked higher in my weekly ranking anyway. I am also taking a chance on Jarvis Landry this week (it is New Orleans after all. The point differential +/- standard deviation for Standard format sent Landry into the top 10 for the week – fingers crossed xx – #trustthemodel).

Oh yea, this is also the league where I have incurred 1 loss so far – 2 wins / 1 loss. 😦 And that loss was to none other than my man; we are competing in both League 1 and League 2; he beat me by 1.9 points Week 1 – somehow I don’t remember him feeling as bad for me with my loss that week as I did for his loss against me in Week 3…

In fact, I think he did a victory dance perhaps akin to Tom Cruise in ‘Risky Business’ – but maybe that is too much information for a Sports ML blog posting :0):

Week4-Standard-@NFLFantasy-@NFL-@Laura_E_Edell

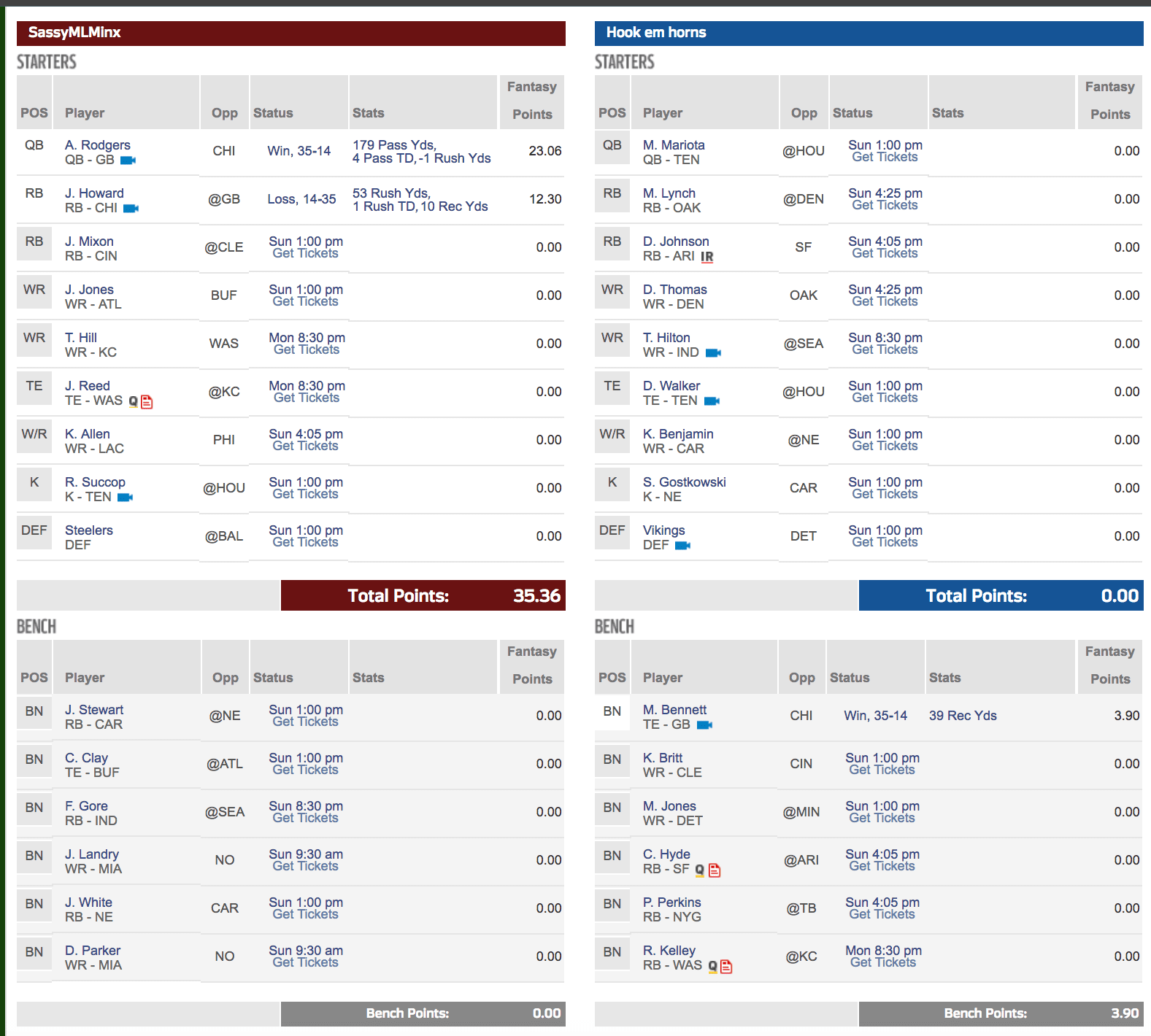

League 3 (Standard): After Thursday night, I am doing ok – I predicted 12.5 +/- .75 st dev for Jordan Howard and he earned 12.30. So, pretty spot on. Aaron Rodgers came in at 23.06 against my prediction of 23.60 (what, is that my dyslexia at play? No, there was also a +/- 1.5 st dev at work, so again, spot on in terms of accuracy). So, maybe, my 3 wins and 0 losses will become 4 wins after this week. But I am not counting any chickens ever before they are hatched. Just look at what happened to my opponent / my man in Week 3 (154 points for me; 45 points for him in PPR format – that is what we call just brutal, people).
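For anyone who wants to check my "spot on" math, the test I am applying is simply whether the actual score landed inside predicted +/- st dev. Here is a small sketch of that check using the Thursday numbers above; the function name is just something I made up for illustration, and this is not my actual model code.

```python
def within_band(predicted: float, st_dev: float, actual: float) -> bool:
    """True when the actual score lands inside predicted +/- st_dev."""
    return abs(actual - predicted) <= st_dev


if __name__ == "__main__":
    # (player, predicted points, st dev, actual points) from the Thursday night results.
    results = [
        ("Jordan Howard", 12.5, 0.75, 12.30),
        ("Aaron Rodgers", 23.60, 1.5, 23.06),
    ]
    for player, predicted, st_dev, actual in results:
        miss = actual - predicted
        verdict = within_band(predicted, st_dev, actual)
        print(f"{player}: miss {miss:+.2f}, within band: {verdict}")
```

Howard missed by 0.20 against a 0.75 band and Rodgers by 0.54 against a 1.5 band, so both fall inside their bands.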

Week4-Standard-League3-@NFL-@NFLFantasy-@Laura_E_Edell

Week 3 – @NFLFantasy PPR Play/Bench Using #MachineLearning

Recap from Week 3 (sorry – I really am trying to post before Thursday night but it seems that between work right now and updating my model stats mid-week, I just run out of time).

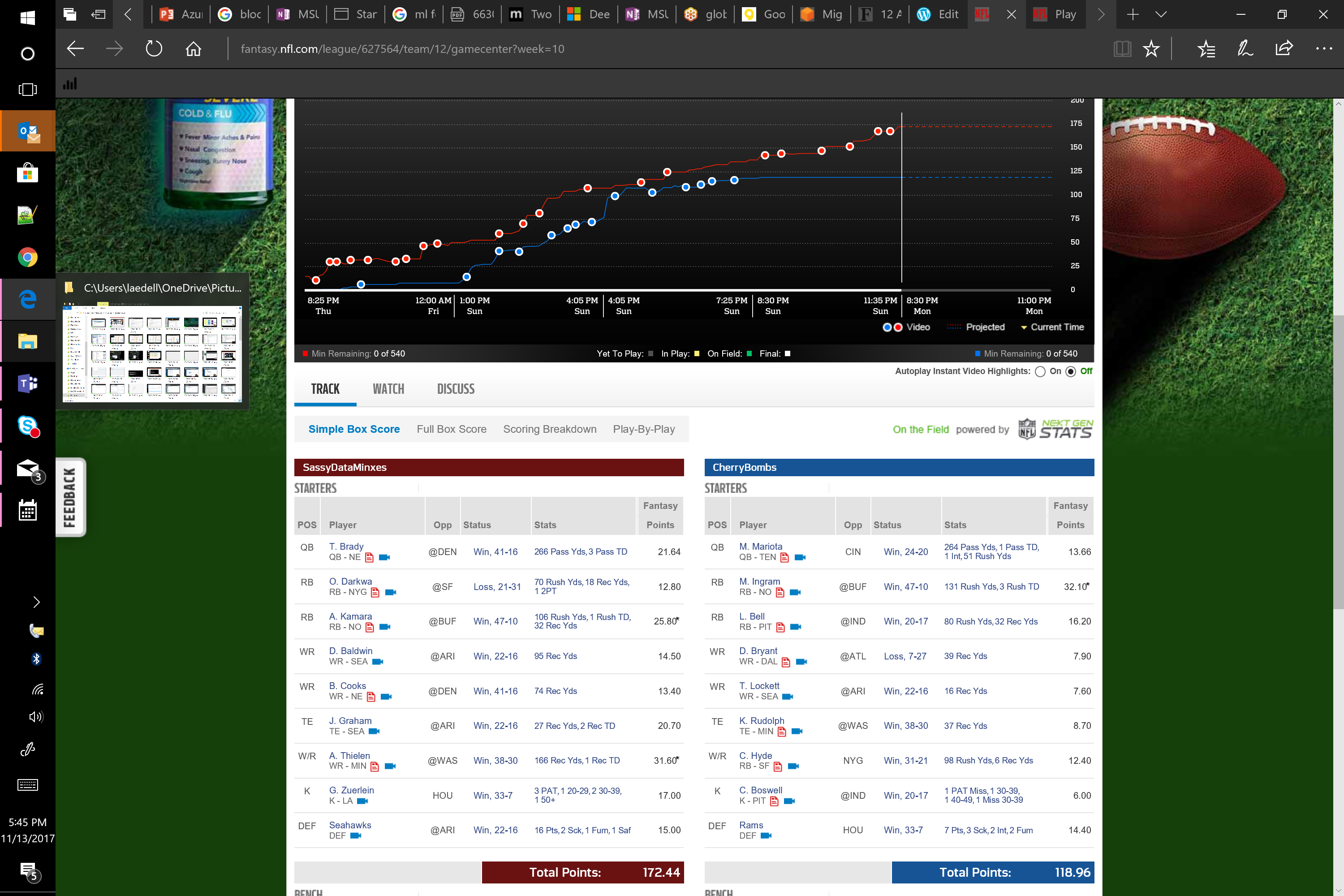

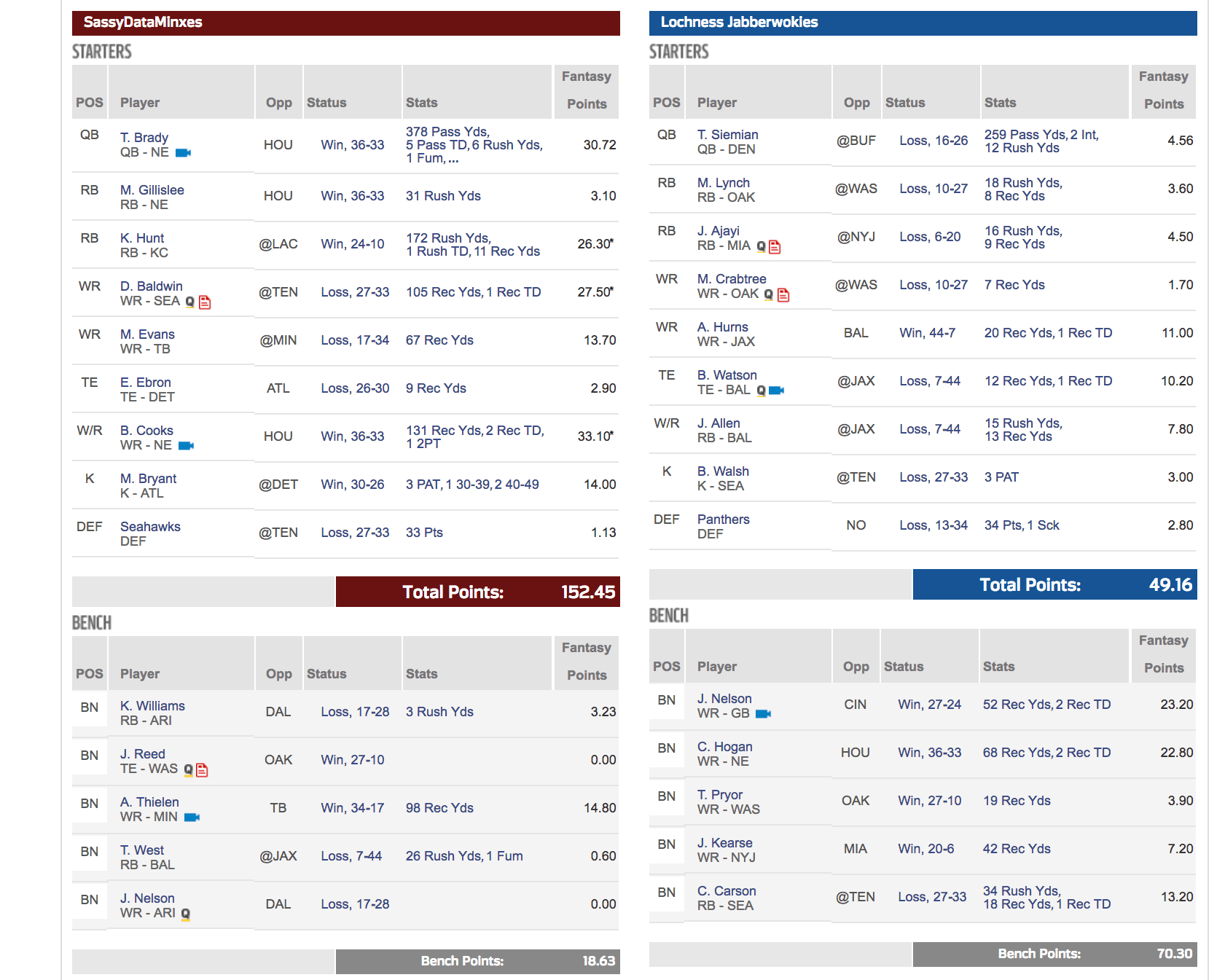

Week 3 was wildly successful. NFL.com was closer this time in terms of predicting my win over my opponent but nowhere near the results that I achieved. I will always stand by Russell Wilson – what kind of Seahawk would I be if I threw in the towel? And in my 2nd league (Standard format), he did not fail! He was simply divine. But alas, he is not my primary league QB (Tom Brady is – a hard pill to swallow personally, being a die hard Seahawks fan after what happened in a certain very important yesteryear game – but he has proven his PPR fantasy value in Week 3). Primary League Week 3 – Wins = 3 / Losses = 0 (remember, after draft day, I was projected to end the season with an 8-8 W/L ratio. So, this might be the week; maybe not).

But last week, I genuinely felt bad – Locheness Jabberwokies, my week 3 opponent, happens to also be my man. And, this annihilation just felt like a win that went one step over the line of fairness. I mean a win’s a win – but this kind of decimation belongs outside of one’s relationship. Trust me. But he was a good sport. Except, he will no longer listen to my neurotic banter about losing in any given week, even if all signs point to a loss. Somehow, when I trust my model, it all works out. Now, I can’t predict injuries mid game like what happened in Week 4 to Ty Montgomery (my League 3 Flex position player). Standard league wise, he brought home 2.3 points ~ projected to earn about 10.70 Standard points with a st. deviation of +/- 1.5. But this was my lineup for Week 3 across my 3 leagues:

League #1 (Primary PPR) – remember, I aim to not just win but also optimize my lineup.

A bench full of points is a fail to me. But in this case, I benched Jordan Reed and picked up whoever was the next available TE off the waiver wire (granted, he definitely contributed nothing). But out of my WR1 and WR2 + WR Flex, those I played were the best options (even though Mike Evans came in about 1.10 points less than Adam Thielen (bench), it was within the expected standard deviation, so either one would have been fine if played).

My RB situation has always been the bane of my league this year starting with my draft choices – Nothing to write home about except seeing the early value of Kareem Hunt (TG), even when NFL.com continued to project very little in his court.

Terrance West was supposed to be double digits but my model said to bench him vs. either Mike Gillislee or Kerwynn Williams. Both scored very little and essentially were within their own standard deviation negating their slight point difference.

All in all, the players I played worked out well, and yes, the many stellar performances that carried those that failed might be outliers in some regard (or at least they won’t bring home that many points week over week). But the PPR space is my golden circle of happiness – after all, I built my original algorithm using PPR league play / bench + historical point spreads + my secret sauce nearly 5 years ago; and those years of learning have “taught” the model (and me) many nuances otherwise missed by others in the sports ML space (though I respect greatly what my fellow ML “sportstaticians” put forth, my approach is very different from what I glean from others’ work).

One day, I would love to have a league with only ML Sports folks; the great battle of the algorithmic approaches – if you are interested, let me know in the comments.

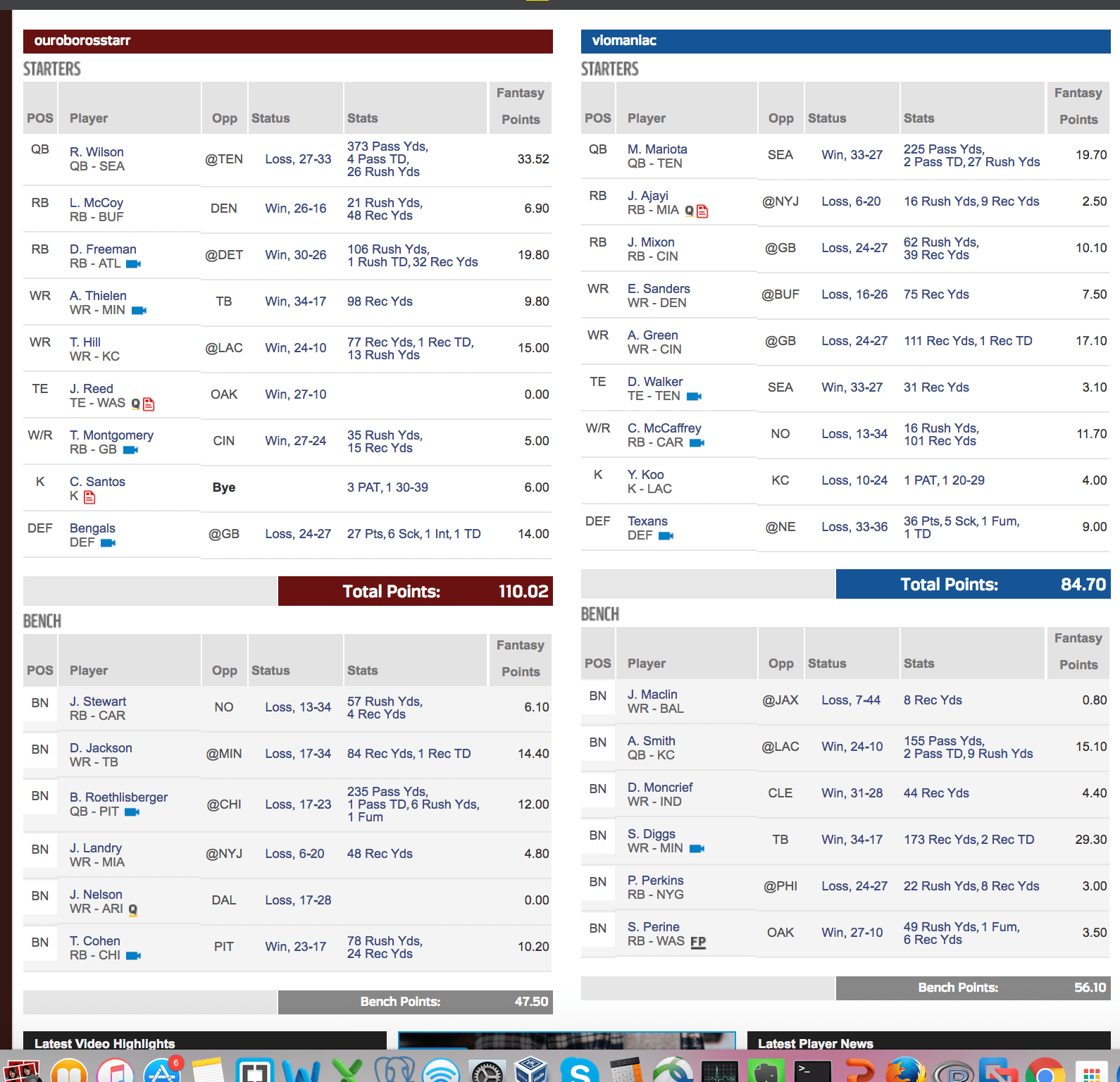

League 2 (Standard): Wins = 3/Losses = 0:

As you can see, I should have played DeSean Jackson over Adam Thielen or my Flex position Ty Montgomery. And geez, I totally spaced on pulling Jordan Reed like I did in League 1. This win was largely because of Russell Wilson, as mentioned before, Devonta Freeman, and the Defense waiver wire pick up of the Bengals, who I’m glad I picked up in time for the game. Oh yeah, I am not sure why Cairo Santos shows as BYE but earned me 6 points??? NFL.com had some weird stuff happening around 12:30 last Sunday; games showed as in play (even though kick off wasn’t for another 30 minutes); and those that showed in play erroneously allowed players to be added from the wire still, as though the games weren’t kicked off. Anyway, not as proud but still another win – Year 1 for Standard; perhaps after another 5 years training Standard like my PPR league, I will have more predictable outcomes, other than luck.

#NFL.com, #Week3, #Standard,@NFLFantasy

Week 1.P2 @NFLFantasy PPR Play/Bench Using #MachineLearning

OK, so going into Monday night, I am doing pretty well. Remember, going into Sunday, I was predicted to lose by 25+ points according to @NFLFantasy (NFL.com). But of course, they course correct all throughout gameday on Sunday and now I am projected to win (not sure if anyone would need ML to determine that based on current outcome).

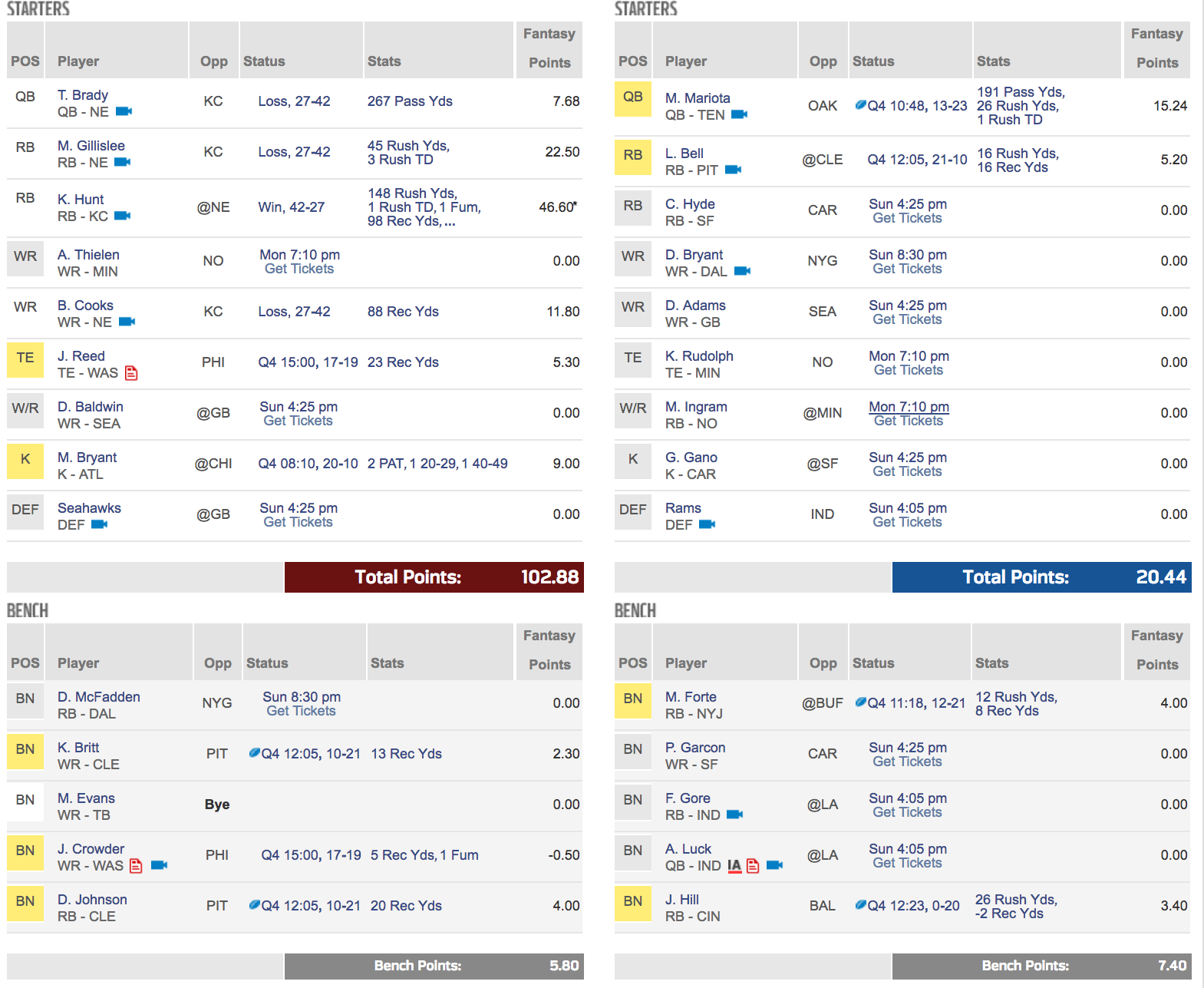

Here is the current status:

Me: 131.71 / Opp: 92.44

My bench only totaled 8.90 / Opp bench: 30.30

I never count my chickens before they are hatched though; I have A. Thielen left; she has M. Ingram and K. Rudolph; If my player scores 0 (which I am not projecting he will), she would need ~40 points to beat me. And crazier outliers have occurred. I knew K. Hunt would be stellar but not THAT stellar.
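The ~40 figure is just the current gap between the two scores. A quick sketch of that arithmetic, with a made-up function name and an optional argument for whatever my last player adds:

```python
def points_needed(my_score: float, opp_score: float, my_remaining: float = 0.0) -> float:
    """Gap the opponent's remaining players must exceed to overtake me."""
    return round(my_score + my_remaining - opp_score, 2)


if __name__ == "__main__":
    # Current status from above: Me 131.71 / Opp 92.44, assuming my last player scores 0.
    print(points_needed(131.71, 92.44))
```

With my remaining player at 0, the gap is 39.27, so she would need just over that from her two remaining players combined.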


But I think I am most proud of my bench. Yes Evans was a forced bench due to unforeseen Bye Week and McFadden was only to fill some Elliott holes when it was expected he would not suit up. But two years ago, I was known for having more points on my bench than in my Starting Lineup. 🙂 If Year 4 of my ML approach turns out as successful as Year 2 and 3, then I might share how I do it algorithmically. Trust me, it takes a lot of patience and training time to get this right.

Week 1.P1 @NFLFantasy PPR Play/Bench Using #MachineLearning

Tardy for this party…So sorry 🙂

We are 2 hours post Sunday kick off; Thursday night came and went without my pre-blogging. This is new for me; I’ll get better. With that, here is who I played on Thursday along with my full team. This will give you a sense of play/bench choices I had.

As I mentioned, I bombed my draft. Out of the 3 leagues I drafted (1 PPR and 2 Standard), the league against my family / the one that started it all, received a grade C with a 61% likelihood of making it to the playoffs, ending the season ranked 5th (out of 10). To give perspective, last year, I was graded a B- going into Week 1, but had a likelihood of 81% of making the playoffs, ranked #2 in terms of wins and losses (11 wins, 3 losses –> and this was exactly how I ended the 2016 season) . My other two leagues graded better; both ranked as draft grade B with league 2 showing a 77% likelihood of making the playoffs, ranked #1 spot BUT with 7 wins and 7 losses (ouch). League 3 shows a playoff likelihood of 67%, ranking 3rd (8 wins, 6 losses).

All in all, I troll the waiver wire heavily and think my model has an uncanny accuracy at finding this year’s rookie-of-the-year as I mentioned in my prior post.

Below are my League 1 lineup going into Thursday and the points scored against my competitor as of 3:27pm on Sunday. Keep in mind, as of Thursday morning, Week 1 was projected by NFL.com to go to the other person (not me), and she had gained 10 projected points between Monday and Thursday. But NFL.com projections are often incorrect. Also, my Dolphins and TB players were unexpectedly benched due to the Hurricane, so that was another event previously unmodeled. And with Ezekiel Elliott playing in Week 1, my temporary backup (McFadden) was another forced bench. But here is who I went with:

So far, in the RB position, we nailed it (we being me and my model). Both games were played on Thursday but both players exceeded publicly available projections by a landslide. Plus of course my competitor had L. Bell, who (as of Q4) performed way under public projections. But my model flagged him as underperforming bumping up my likelihood to win this week. I also did a good job working with my subpar drafted team in terms of bench: most significantly, J. Crowder. When I updated the model, his name was flagged for underperformance and moved to bench. I had Duke Johnson in but swapped for Gillislee despite all the mixed feedback and noise online about him.

J. Reed (TE) came in 2 points under what I expected but there is still time on the clock. And my competitor has a lot of stout players on her roster to play. Hopefully D. Baldwin and A. Thielen bring home something good – My poor Seahawks defense was an emotional draft (sort of a hometown for me). Just like T Brady was the worst QB for me because I have a visceral response to his game play style, evident by the points above. Hopefully he will perform at par with what everyone expects from him.

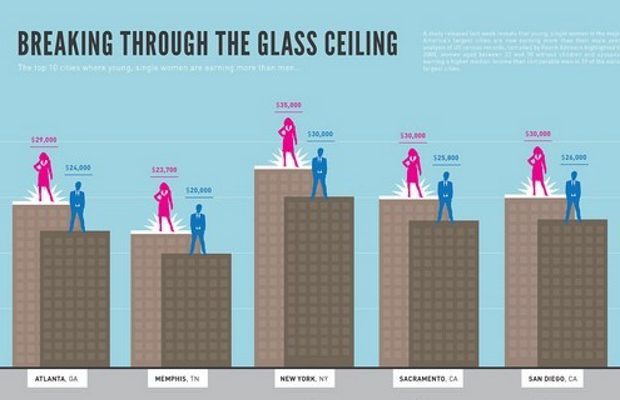

Win @NFLFantasy PPR Leagues w/ ML

So, for the past 3 years I have been using #machine-learning (ML) to help me in my family-based PPR #fantasy-football league. When I joined the league, the commissioner, who is also my partner’s father, said I would never win using statistics as the basis of my game play. Being cut from the “I’ll show you” aka “well, fine…I’ll prove it to you” cloth that some of us gals working in the tech industry don as we break down stereotypical walls and glass ceilings is something I’ve always enjoyed about my career, and I love that there is an infographic to tell the tale, courtesy of mscareergirl.com (my only complaint is that the source text in white is basically impossible to read, but at least the iconography and salaries are legible):

infographic provided by Danyel Surrency Jones on mscareergirl.com

I’ve never whined that in my industry, I tend to work primarily with the male species or that they are “apparently” paid more *on average* because some survey says so. My work ethic doesn’t ride on gender lines — This train departs from the “proven value based on achievements earned & result in commensurate remuneration” station (woah, that’s a mouthful). I take challenges head-on not to prove to others, but to prove to myself, that I can do something I set my sights on, and do that something as well, if not better, than counterparts. Period. Regardless of gender. And that track has led to the figurative money ‘line’ (or perhaps it’s literal in the case of DFS or trains – who’s to say? But I digress…more on that later).

So, I joined his league on NFL.com, so aptly nicknamed SassyDataMinxes (not sure who the other minxes are in my 1 woman “crew”, but I never said I was a grammarian; mathematician, aka number ninja, well, yes; but linguist, maybe not).

Year 1, as to be expected *or with hindsight*, was an abysmal failure. Keep in mind that I knew almost absolutely nothing when it came to Pro Football or Fantasy sports. I certainly did not know players or strategies or that fantasy football extended beyond Yahoo Pick ‘Em leagues, which again, in hindsight, would have been a great place to start my learning before jumping headfirst into the world of PPR/DFS.

At its core, Pick ‘Em requires you to pick the winning team in each weekly matchup, and assuming you have the most correct picks, you win that week. If there are no teams on BYE that week, you have 32 teams or 16 games to “predict outcomes”; a binary 0 or 1 for lose or win in essence. Right? Never, she exclaims, because WAIT, THERE’S MORE: you have to pick a winner based on another factor: point spread. Therefore, if 0 means lost and 1 means won, you get a 1 per win EXCEPT if the margin of victory is less than what the “book makers” out of Vegas determine to be the “winning spread” – You could technically pick the winning team and still get a goose egg for that matchup if the team did not meet their point spread (ooh, it burns when that happens). The team that wins is happily prancing around the field singing “We are the Champions” while you are the loser for not betting against them because they were comfortable winning by a paltry lesser amount than necessary – Ooh, the blood boils reliving those early games – especially since my Grandmother, who won that year, picked her teams based on cities she liked or jersey colors that her ‘Color Me Happy’ wheel said were HER best COOL tones; the most unscientific approach worked for her so many times I now think she actually IS A bookie running an illegal operation out of her basement, which fronts as “her knitting circle” – Yah, as if any of us believe that one, Grandma :)! (She is a walking football prediction algorithm).
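The spread rule described above can be sketched in a few lines of Python. This is a simplified illustration, not Yahoo’s actual scoring code: the `Game` fields and the sample scores are made up, it assumes your pick is the favorite, and it treats a margin exactly equal to the spread as a cover.

```python
from dataclasses import dataclass

@dataclass
class Game:
    picked_score: int   # final score of the team you picked
    other_score: int    # final score of their opponent
    spread: float       # points your pick was expected to win by (hypothetical field)

def pick_result(game: Game) -> int:
    """Return 1 if the pick won AND covered the spread, else the goose egg."""
    margin = game.picked_score - game.other_score
    return 1 if margin > 0 and margin >= game.spread else 0

# Made-up week: one cover, one win that misses the spread, one outright loss.
week = [Game(27, 20, 3.5), Game(24, 23, 6.5), Game(10, 17, 2.5)]
weekly_score = sum(pick_result(g) for g in week)
print(weekly_score)
```

The second game is the one that burns: the pick wins 24 to 23 but needed to win by 6.5, so it scores 0.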

So, something as seemingly simple as Yahoo Pick ‘Em can actually be harder than it appears unless you are her. But, still…markedly easier than a PPR league; and light years easier than DFS/Auction style fantasy leagues when it comes to predicting gaming outcomes at the player, weekly matchup and league perspectives.

Hindsight is such a beautiful thing (*I think I have said that before*) because to espouse all of these nuggets of knowledge as though I am the Alliteration Arbiter of All –> The Socratic Seer of Scoring Strategies…And again, as always I digress (but, ain’t it fun!).

OK, let’s continue…So, we’ve established that predicting fantasy football gaming outcomes requires a lot of *something* – And we’ve established that just cuz it seems simple, or did, when trying to predict outcomes along a massively mutable set of variables *wait, why didn’t I just READ that sentence or THINK it when I started!* If I could go back in time and ask my 3-years-younger self:

“Self, should I stop this nonsense now, alter the destination or persevere through what, at times, might seem like a terrible journey?” *HUM, I think most pensively*.

And then answer myself, just like the good only-child I am:

“NEVER – Self, nothing worth getting is easy to get, but the hardest fought wins are the most worthwhile when all is said and done and remember, don’t let the bedbugs bite; YOU’RE bigger than them/that.”

Or something along those lines, perhaps…

NEVER was my answer because in 2015 and 2016 (Years 2 and 3), I was #1 in the league and won those coveted NFL.com trophies and a small pool of money. But what I won most of all was bragging rights.

Oh baby, you can’t buy those…

Not even on the Dark Web from some Onion-Routed Darker Market. Especially the right to remind a certain commissioner / naysayer du jour / father-in-law-like that my hypothesis of using ML & statistics ALONE could beat his years of institutional football knowledge and know-how. I also won a 2nd NFL-managed league that I joined in Year 3 to evaluate my own results with a different player composition.

So Year 1 was a learning year, a failure to others in the league but super valuable to me. Year 2 was my 1st real attempt to use the model, though with much supervision and human “tweaking”. In Year 3, for the non-family league (league #2 for brevity’s sake; snarky voice in head “missed that 4 paragraphs ago” – Burn!), I had drafted an ideal team, rated A-.

Year 3 was a double test to ensure Year 2 wasn’t a fluke. Two leagues played:

League #1 with the family, was a team comprised of many non-ideal draft picks chosen during non-optimized rounds (QB in round 2, DEF in round 4 etc). But the key in both Year 2 and 3, was spotting the diamonds in the rookie rough – my model bubbled up unknown players or as they are known to enthusiasts: “deep sleepers” that went on to become rookie-of-the-year type players: in 2015, that was Devonta Freeman (ATL); in 2016, that was ‘Ty-superfreak’ Hill aka Tyreek Hill (Chiefs) and even better, Travis Kelce (who had been on my roster since 2015 but rose to the occasion in 2016, BEEEG-TIME) . That has always been a strength of my approach to solving this outcomes conundrum.

So, that all being said, in this Year 4, I plan to blog PRE-GAME with my predictions for my team with commentary on some of the rankings of other players. Remember, it isn’t just a player outcome, but it is player outcome in relation to your matchup that week within your league and in the context of who best to play vs. bench given those weekly changing facets. Some weeks, you might look like a boss according to NFL.com predictions; but in fact, should be playing someone else who might have a lower-than-you-are-comfortable-putting-into-your-lineup prediction. Those predictions, folks, HAVE ISSUES – But I believe in the power of model evaluation and learning, hence the name Machine Learning or better yet, Deep Learning approaches.

Side note: reminds me of that “SAP powered” player comparison tool:

which was DOWN / not accessible most of the aforementioned season when it was being hammered on by fans in need of a fantasy fix (reminds me of an IBM Watson joke but I will keep that one to myself) – whoever is at fault – you should make sure your cloud provider “models” out an appropriate growth-based capacity & utilization plan IF you are going to feature it on your fantasy football site, NFL.com.

Next posting will be all about how I failed during Year 4’s draft (2017) and what I am planning to do to make up for it using the nuggets of knowledge that are an offshoot of retraining the MODEL(s) during the week – Plus, I will blog my play/bench predictions which will hopefully secure a week 1 win (hopefully because I still need to retrain this week but not until Wednesday :)).

In a separate post, we’ll talk through the train…train…train phases and which datasets are most important for differentiating statistically important features from the sea of unworthy options sitting out there waiting for you to pluck them into your world. But don’t fall prey to those sinister foes…They might just be the “predictable” pattern of noise that clouds one’s senses. And of course, scripting and more scripting; so many lines of code were written and rewritten covering the gamut of scripting languages from the OSS data science branch (no neg from my perspective on SAS or SPSS other than they cost $$$ and I was trained on R in college *for free* like most of my peers) – well, free is a relative term, and you take the good with the bad when you pull up your OSS work-boots –> R has its drawbacks when it comes to processing larger-than-life datasets without herculean sampling efforts just to be able to successfully execute a .R web scraping script without hitting the proverbial out of memory errors, or to actually train the requisite models that are needed to solve self-imposed ML fantasy football challenges such as this. Reader thinks to oneself, “she sure loves those tongue twisting alliterations.”

And gals, I love helping out a fellow chica (you too boys/men, but you already know that, eh) — Nobody puts baby in the corner, and I never turn my back on a mind in need or a good neg/dare.

Well, Year 4 — Happy Fantasy Football Everyone — May the wind take you through the playoffs and your scores take you all the way to the FF Superbowl 🙂

Eye Tracking & Applied ML: Soapbox Validations

Anyone who has read my blog (shameless self-plug: http://www.lauraedell.com) over the past 12 years will know I am very passionate about drinking my own analytical Kool-Aid. Whether during my stints as a Programmer, BI Developer, BI Manager, Practice Lead / Consultant or Senior Data Scientist, I believe wholeheartedly in measuring my own success with advanced analytics. Even my fantasy football success (more on that in a later post) can be attributed to Advanced Machine Learning…But you wouldn’t believe how often this type of measurement gets ignored.

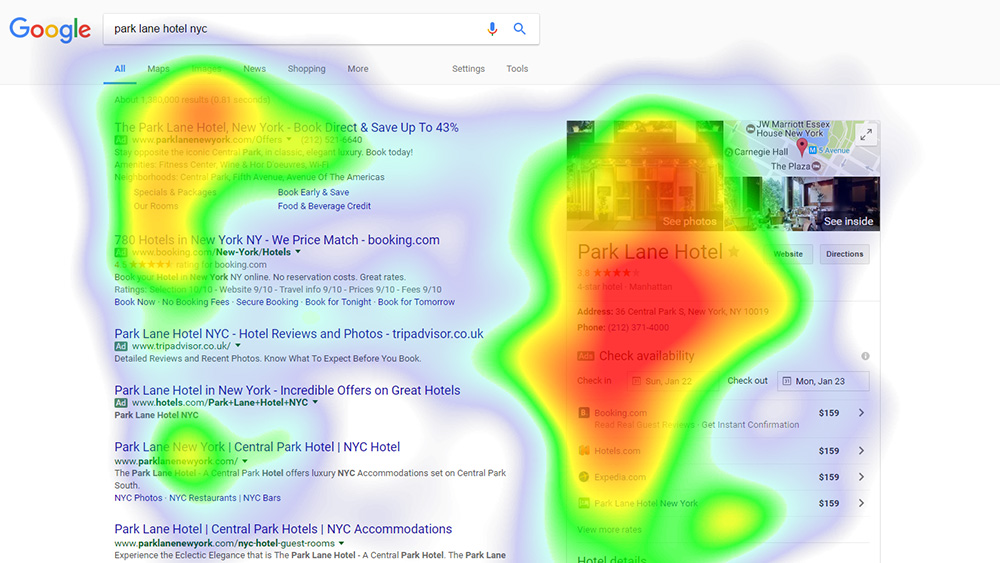

Introducing you, dear reader, to my friend “Eye-Tracker” (ET). Daunting little set of machines in that image, right?! But ET is a bona fide bada$$ in the world of measurement systems; oh yeah, and ET isn’t a new tech trend – in fact, mainstream ET systems are a staple of any PR, marketing or web designers’ tool arsenal as a stick to measure program efficacy between user intended behavior & actual outcomes/actions.

In my early 20’s, I had my own ET experience & have been a passionate advocate since, having witnessed what happens when you compound user inexperience with poorly designed search / e-commerce operator sites. I was lucky enough to work for the now uber online travel company who shall go nameless (okay, here is a hint: remember a little ditty that ended with some hillbilly singing “dot commmm” & you will know to whom I refer). This company believed so wholeheartedly in the user experience that they allowed me, young ingénue of the workplace, to spend thousands on eye tracking studies against a series of balanced scorecards that I was developing for the senior leadership team. This is important because you can ASK someone whether a designed visualization is WHAT THEY WERE THINKING or WANTING, even if built iteratively with the requestor, and still not get the real answer. Why, you ponder to yourself, would this be necessary when I can just ask/survey my customers about their online experiences with my company and save beaucoup $$?

Well, here’s why: 9x out of 10, survey participants, in not wanting to offend, will nod ‘yes’ instead of being honest, employing conflict avoidance at its best. Note, this applies to most, but I can think of a few in my new role who are probably reading this and shaking their head in disagreement at this very moment.

Eye tracking studies are used to measure efficacy by tracking what content areas engage users’ brains vs. areas that fall flat, are lackluster, overdesigned &/or contribute to eye/brain fatigue. They measure this by “tracking” where & for how long your eyes dwell on a quadrant (aka visual / website content / widget on a dashboard) and by recording the path & movement of the eyes between different quadrants on a page. It’s amazing to watch these advanced, algorithmic-tuned systems pick up even the smallest flick of one’s eyes, whether darting to or away from the “above-fold” content, in ‘near’ real-time. The intended audience being measured generates the validation statistics necessary to evaluate how well your model fits the data. In the real-world, attaboys or “ya done a good job” high fives should be doled out only after validating efficacy: e.g., if customers’ dwell time increases, you can determine whether that is randomness or the intended effect; otherwise, go back to the proverbial drawing board until you earn that ‘Atta boy’ outright.

What I also learned, which seems a no-brainer now: people read from Left Top to Right Bottom (LURB). So, when I see anything that doesn’t at LEAST follow those two simple principles, I just shake my head and tsk tsk tsk, wondering if human evolution is shifting with our digital transformation journey or are we destined to be bucketed with the “that’s interesting to view once” crowd instead of rising to the levels of usefulness it was designed for.
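To make “dwell” and “path” concrete, here is a minimal Python sketch of how both could be computed from raw gaze samples. It is an illustration under stated assumptions, not how any commercial eye tracker works: the `(t_ms, x, y)` sample format, the four equal quadrants, the page size and the sample data are all made up.

```python
from collections import defaultdict

def quadrant(x, y, width, height):
    """Name the page quadrant that contains the gaze point (x, y)."""
    col = "left" if x < width / 2 else "right"
    row = "top" if y < height / 2 else "bottom"
    return f"{row}-{col}"

def dwell_times(samples, width, height):
    """Total milliseconds spent in each quadrant.

    samples: list of (t_ms, x, y) sorted by time. The time between two
    consecutive samples is credited to the quadrant of the earlier one.
    """
    totals = defaultdict(float)
    for (t0, x0, y0), (t1, _, _) in zip(samples, samples[1:]):
        totals[quadrant(x0, y0, width, height)] += t1 - t0
    return dict(totals)

def scan_path(samples, width, height):
    """Ordered list of quadrants visited, collapsing consecutive repeats."""
    path = []
    for _, x, y in samples:
        q = quadrant(x, y, width, height)
        if not path or path[-1] != q:
            path.append(q)
    return path

# Made-up gaze samples on a hypothetical 1000 x 800 page.
samples = [(0, 100, 100), (200, 120, 110), (500, 900, 150),
           (600, 880, 700), (1000, 880, 720)]
print(dwell_times(samples, 1000, 800))
print(scan_path(samples, 1000, 800))
```

In this toy run the eyes spend the longest in the top left, glance at the top right, then settle in the bottom right. Those per-quadrant totals are the kind of numbers a heatmap colors in.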

Come on now, how hard is it to remember to stick the most important info in that top left quadrant and the least important in the bottom right, especially when creating visualizations for use in the corporate workplace by senior execs? They have even less time & attention these days to focus on even the most relevant KPIs, those they need to monitor to run their business & will get asked to update the CEO on each QTR, with all those fun distractions that come with the latest vernacular du-jour taking up all their brain space: “give me MACHINE LEARNING or give me death”; the upstart that replaced mobile/cloud/big data/business intelligence (you fill in the blank).

But for so long, it was me against the hard reality that no one knew what I was blabbing on about, nor would they give me carte blanche to re-run those studies ever again. And lo and behold, my Laura-ism soapbox has now been vetted, in fact, quantified by a prestigious University professor from Carnegie, all possible because a little-known hero named Edmond Huey, now near and dear to my heart, grandfather of the heatmap, followed up his color-friendly block chart by building the first device capable of tracking eye movements while people were reading. This breakthrough initiated a revolution for scientists, but it was intrusive: readers had to wear special lenses with a tiny opening and a pointer attached, like the 1st image pictured above.

Fast forward 100 years…combine all ingredients into the cauldron of innovation & technological advancement, sprinkled with my favorite algorithmic pals: CNN & LSTM & voila! You have just baked yourself a popular visualization known as a heat/tree map (with identifiable info redacted) :

This common visual is akin to eye tracking analytics which you will see exemplified in the last example below. Cool history lesson, right?

Even cooler is this example from a travel website ‘Travel Tripper’ which published Google eye-tracking results specific to the hotel industry. Instead of a treemap that you might be used to (akin to a Tableau or other BI tool visualization OOTB), you get the same coordinates laid out over search results in this example; imagine having your website underneath and instead of guessing what content should be above or below the fold, in the top left or right of the page, you can use these tried and true eye tracking methods to quantify exactly what content items customers or users are attracted to 1st and where their eyes “dwell” the longest on the page (red hot).

So, for those non-believers, I say, become a web analytic trendsetter, driving the future of machine design forward (ala “Web Analytics 3.0”).

Be a future-thinker, forward mover, innovator of your data science sphere of influence, always curious yet informed to make intelligent choices.

Microsoft Data AMP 2017

Data AMP 2017 just finished and some really interesting announcements came out specific to our company-wide push into infusing machine learning, cognitive and deep learning APIs into every part of our organization. Some of the announcements are ML enablers while others are direct enhancements.

Here is a summary with links to further information:

- SQL Server R Services in SQL Server 2017 is renamed to Machine Learning Services since both R and Python will be supported. More info

- Three new features for Cognitive Services are now Generally Available (GA): Face API, Content Moderator, Computer Vision API. More info

- Microsoft R Server 9.1 released: Real time scoring and performance enhancements, Microsoft ML libraries for Linux, Hadoop/Spark and Teradata. More info

- Azure Analysis Services is now Generally Available (GA). More info

- **Microsoft has incorporated the technology that sits behind the Cognitive Services inside U-SQL directly as functions. U-SQL is part of Azure Data Lake Analytics(ADLA)

- More Cortana Intelligence solution templates: Demand forecasting, Personalized offers, Quality assurance. More info

- A new database migration service will help you migrate existing on-premises SQL Server, Oracle, and MySQL databases to Azure SQL Database or SQL Server on Azure virtual machines. Sign up for limited preview

- A new Azure SQL Database offering, currently being called Azure SQL Managed Instance (final name to be determined):

  - Migrate SQL Server to SQL as a Service with no changes

  - Support SQL Agent, 3-part names, DBMail, CDC, Service Broker

  - **Cross-database + cross-instance querying

  - **Extensibility: CLR + R Services

  - SQL profiler, additional DMVs support, Xevents

  - Native back-up restore, log shipping, transaction replication

  - More info

  - Sign up for limited preview

- SQL Server vNext CTP 2.0 is now available and the product will be officially called SQL Server 2017:

Those I am most excited about I added ** next to.

This includes key innovations in our approach to AI and in strengthening how our deep learning offerings compete against Google TensorFlow, for example. Check out the following blog posting: https://blogs.technet.microsoft.com/dataplatforminsider/2017/04/19/delivering-ai-with-data-the-next-generation-of-microsofts-data-platform/ :

- The first is the close integration of AI functions into databases, data lakes, and the cloud to simplify the deployment of intelligent applications.

- The second is the use of AI within our services to enhance performance and data security.

- The third is flexibility—the flexibility for developers to compose multiple cloud services into various design patterns for AI, and the flexibility to leverage Windows, Linux, Python, R, Spark, Hadoop, and other open source tools in building such systems.

Wonderful World of Sports: Hey NFL, Got RFID?

As requested by some of my LinkedIn followers, here is the NFL Infographic about RFID tags I shared a while back:

I hope @NFL @XboxOne #rfid data becomes more easily accessible. I have been tweeting about the Zebra deal for 6 months now, and the awesome implications this would have on everything from sports betting to fantasy enthusiasts to coaching, drafting and what have you. Similarly, I have built a fantasy football (PPR) league bench/play #MachineLearning model using #PySpark which, as it turns out, is pretty good. But it could be great with the RFID stream.

This is where the #IoT rubber really hits the road because there are so many more fans of the NFL than there are folks who really grok the “Connected Home” (not knocking it, but it doesn’t have the reach tentacles of the NFL). Imagine measuring the burn-rate output vs. performance degradation of these athletes mid game and one day, being able to stream that on the field or booth for game course corrections. Aah, a girl can only dream…

Is Machine Learning the New EPM Black?

I am currently a data scientist & am also a certified lean six sigma black belt. I specialize in the Big Data Finance, EPM, BI & process improvement fields where this convergence of skills has provided me the ability to understand the interactions between people, process and technology/ tools.

I would like to address the need to transform traditional EPM processes by leveraging more machine learning to help reduce forecast error and eliminate unnecessary budgeting and planning rework and cycle time using a 3 step ML approach:

1st, determine which business drivers are statistically meaningful to the forecast (correlation), eliminating those that are not.

2nd, cluster those correlated drivers by significance to determine those that cause the most variability to the forecast (causation).

3rd, use the output of 1 and 2 as inputs to the forecast, and apply ML in order to generate a statistically accurate forward looking forecast.
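The three steps above can be sketched with nothing but the Python standard library. Treat this as a toy under loud assumptions: every driver name and number is invented, a simple ranking by absolute correlation stands in for the clustering in step 2, and a one-variable least-squares line stands in for the ML model in step 3.

```python
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0

def select_drivers(drivers, target, threshold=0.5):
    """Step 1: keep only drivers whose |correlation| with the target clears the bar."""
    scores = {name: pearson(vals, target) for name, vals in drivers.items()}
    return {name: r for name, r in scores.items() if abs(r) >= threshold}

def rank_drivers(selected):
    """Step 2 (stand-in for clustering): order drivers by strength of correlation."""
    return sorted(selected, key=lambda name: abs(selected[name]), reverse=True)

def fit_line(xs, ys):
    """Step 3 (stand-in for ML): ordinary least squares for y = slope * x + intercept."""
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx

# Invented quarterly history: revenue is the number being forecast.
revenue = [100, 112, 124, 131, 150]
drivers = {
    "marketing_spend": [10, 12, 15, 16, 20],
    "sales_calls": [200, 190, 230, 240, 250],
    "office_plants": [5, 3, 6, 4, 5],
}

selected = select_drivers(drivers, revenue)
ranked = rank_drivers(selected)
slope, intercept = fit_line(drivers[ranked[0]], revenue)
forecast = slope * 22 + intercept  # next period, assuming planned spend of 22
print(ranked, round(forecast, 1))
```

With real data you would swap the stand-ins for proper clustering and a multi-driver model, but the flow of filter, rank, then forecast stays the same.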

Objection handling, in my experience, focuses on cost, time and the sensitive change management aspect. Here is how I have handled each:

- Cost: all of these models can be built using free tools like R and Python data science libraries, so there is minimal to no technology/tool capEx/opEx investment.

- Time: most college grads with either a business, science or computer engineering degree will have undoubtedly worked with R and/or Python (and more) while earning their degree. This reduces the ramp time to get folks acclimated and up to speed. To fill the remaining skill set gap, they can use the vast libraries of work already provided by the R / Python initiatives or the many other data science communities available online for free as a starting point, which also cuts the time lost to unnecessary cycles and rework from trying to define drivers based on gut feel only.

- Change: this is the bigger objection that has to be handled according to the business culture and openness to change. Best means of handling this is to simply show them. Proof is in the proverbial pudding so creating a variance analysis of the ML forecast, the human forecast and the actuals will speak volumes, and bonus points if the correlation and clustering analysis also surfaced previously unknown nuggets of information richness.

Even without finding the golden ticket, the CFO will certainly take notice of a more accurate forecast and appreciate the time and frustration savings from a less consuming budget and planning cycle.
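The “simply show them” variance analysis mentioned under Change can be as small as the sketch below. All figures are invented purely for illustration, and mean absolute percentage error (MAPE) is just one reasonable choice of error measure, not the only one.

```python
def mape(forecast, actuals):
    """Mean absolute percentage error of a forecast against actuals, in percent."""
    errors = [abs(f - a) / abs(a) for f, a in zip(forecast, actuals)]
    return 100 * sum(errors) / len(errors)

# Invented numbers for four periods; not results from any real company.
actuals = [100, 120, 130, 150]
human_forecast = [110, 110, 145, 135]
ml_forecast = [103, 118, 127, 154]

# Period-by-period variance (forecast minus actual) for each method.
for period, (a, h, m) in enumerate(zip(actuals, human_forecast, ml_forecast), start=1):
    print(f"Period {period}: actual={a}  human variance={h - a:+}  ML variance={m - a:+}")

human_error = mape(human_forecast, actuals)
ml_error = mape(ml_forecast, actuals)
print(f"Human MAPE: {human_error:.2f}%  ML MAPE: {ml_error:.2f}%")
```

Putting the two error numbers side by side against the same actuals is usually all the proof the pudding needs.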

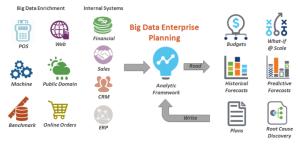

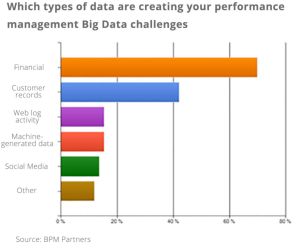

Utilizing #PredictiveAnalytics & #BigData To Improve Accuracy of #EPM Forecasting Process

I was amazed when I read @TidemarkEPM’s awesome new white paper on the “4 Steps to a Big Data Finance Strategy.” This is an area I am very passionate about; some might say it’s become my soap-box since my days as a Business Intelligence consultant. I saw the dawn of a world where EPM, specifically the planning and budgeting process, was elevated from gut feel analytics to embracing #machinelearning as a means of separating the drivers that are statistically significant from those that have no verifiable impact, and ultimately using those to feed a more accurate forecast model.

Traditionally (even still today), finance teams sit in a conference room with Excel spreadsheets from Marketing, Customer Service etc., and basically, define the current or future plans based on past performance mixed with a sprinkle of gut feel (sometimes, it was more like a gallon of gut feel to every tablespoon of historical data). In these same meetings just one quarter later, I would shake my head when the same people questioned why they missed their targets or achieved a variance that was greater/less than the anticipated or expected value.

The new world order of Big Data Finance leverages the power of machine learned algorithms to derive true forecasted analytics. This was a primary driver for my switching from a pure BI focus into data science. I have seen so many companies embrace true “advanced predictive analytics” and harness its value and benefits with confidence, instead of fearing this unknown statistical realm, not to mention all of the unsettled glances you get when you say the nebulous “#BigData” or “#predictiveAnalytics” phrases.

But I wondered, exactly how many companies are doing this vs. the old way? And I was very surprised to learn from the white-paper that 22.7% of people view predictive capabilities as “essential” to forecasting, with 52.2% claiming it nice to have. Surprised is an understatement; in fact, I was floored.

We aren’t just talking about including weather data when predicting consumer buying behaviors. What about the major challenge for the telecommunications / network provider with customer churn? Wouldn’t it be nice to answer the question: Who are the most profitable customers WHO have the highest likelihood of churn? And wouldn’t it be nice to not have to assign 1 to several analysts xx number of days or weeks to be able to crunch through all of the relevant data to try to get to an answer to that question? And still probably not have all of the most important internal indicators or be including indicators that have no value or significance to driving an accurate churn outcome?
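One simple way to frame the “most profitable customers with the highest likelihood of churn” question is to rank customers by profit at risk, meaning profit multiplied by churn probability. The sketch below assumes the churn probabilities already exist from some upstream model; the customer names, fields and numbers are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Customer:
    name: str
    annual_profit: float       # profit this customer brings in per year
    churn_probability: float   # 0..1, assumed to come from an upstream churn model

def profit_at_risk(customer: Customer) -> float:
    """Expected profit lost if the churn prediction plays out."""
    return customer.annual_profit * customer.churn_probability

def top_at_risk(customers, n=3):
    """Customers ordered by profit at risk, highest first."""
    return sorted(customers, key=profit_at_risk, reverse=True)[:n]

# Hypothetical customers, for illustration only.
customers = [
    Customer("A", 12000, 0.10),
    Customer("B", 3000, 0.80),
    Customer("C", 9000, 0.45),
    Customer("D", 500, 0.95),
]

for c in top_at_risk(customers, n=2):
    print(c.name, profit_at_risk(c))
```

Notice that neither the most profitable customer (A) nor the most likely to churn (D) tops the list; it is the combination that matters.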

What about adding in 3rd party external benchmarking data to further classify and correlate these customer indicators before you run your churn prediction model? To manually do this is daunting and so many companies, I now hypothesize, revert to the old ways of doing the forecast. Plus, I bet they have a daunting impression of the cost of big data and the time to implement because of past experiences with things like building the uber “data warehouse” to get to that panacea of the “1 single source of truth”…On the island of Dr. Disparate Data that we all dreamt of in our past lives, right?

I mean we have all heard that before and yet, how many times was it actually done successfully, within budget or in the allocated time frame? And if it was, what kind of quantifiable return on investment did you really get before annual maintenance bills flowed in? Be honest…No one is judging you; well, that is, if you learned from your mistakes or can admit that your pet project perhaps bit off too much and failed.

And what about training your people or the company to utilize said investment as part of your implementation plan? What was your budget for this training and was it successful, or did you have to hire outside folks like consultants to do the work for you? And by doing so, how long did it actually take to break the dependency on those external resources and still be successful?

Before the days of Apache Spark and other Open Source in-memory or streaming technologies, the world of Big Data was just blossoming into what it was going to grow into as a more mature flower. On top of which, it takes a while for someone to fully grok a new technology, even with the most specialized training, especially if they aren’t organically a programmer, like many Business Intelligence implementation specialists were/are. That is because those who have past experience with something like C++, can quickly apply the same techniques to newer technologies like Scala for Apache Spark or Python and be up and running much faster vs. someone who has no background in programming trying to learn what a loop is or how to call an API to get 3rd party benchmarking data. We programmers take that for granted when applying ourselves to learning something new.

And now that these tools are more enterprise-ready and friendly, with new integration modules for tools like R or MATLib for the statistical analysis, coupled with all of the free training offered by places like the University of California, Berkeley (via edX online), now is the time to adopt Big Data Finance more than ever.

In a world where the machine learning algorithm can be paired with traditional classification modeling techniques automatically, and said algorithms have been made publicly available for your analysts to use as a starting point or in their entirety for your organization, one no longer needs to be daunted by the thought of implementing Big Data Finance or testing out the waters of accuracy to see if you are comfortable with the margin of error between your former forecasting methodology and this new world order.
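If you want to test those waters yourself, here is a minimal sketch of one way to compare the margin of error between a former forecasting method and a newer model. It uses mean absolute percentage error (MAPE), which is my choice of metric for illustration and not the only option. The function name and every number below are hypothetical, made up purely for the example.

```python
def mean_absolute_percentage_error(actual, forecast):
    """Average of |actual - forecast| / |actual|, expressed as a percentage."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    if not actual:
        raise ValueError("at least one period is required")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    errors = [abs(a - f) / abs(a) for a, f in zip(actual, forecast)]
    return 100.0 * sum(errors) / len(errors)


# Hypothetical monthly revenue figures (illustrative only, not real data).
actual = [100.0, 200.0, 400.0]
legacy_forecast = [110.0, 180.0, 440.0]  # e.g. the old spreadsheet method
model_forecast = [105.0, 190.0, 420.0]   # e.g. a machine learning model

legacy_mape = mean_absolute_percentage_error(actual, legacy_forecast)
model_mape = mean_absolute_percentage_error(actual, model_forecast)

print(f"Legacy forecast MAPE: {legacy_mape:.1f}%")
print(f"Model forecast MAPE:  {model_mape:.1f}%")
```

Running both methods side by side over the same historical periods gives you a concrete number to be comfortable (or uncomfortable) with before you switch.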

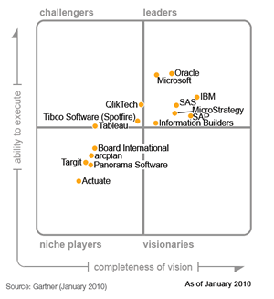

2015 Gartner Magic Quadrant – Boundaries Blur Between BI & Data Science

…a continuing trend which I gladly welcome…

IT WAS the best of times, it was the worst of times, it was the age of wisdom, it was the age of foolishness, it was the epoch of belief, it was the epoch of incredulity, it was the season of Light, it was the season of Darkness, it was the spring of hope, it was the winter of despair, we had everything before us, we had nothing before us…

–Charles Dickens

Truer words were never spoken, whether about the current technological times or deep in our past (remember the good ole enterprise report books, aka the 120 page paper weight?)

And, this data gal couldn’t be happier with the final predictions made by Gartner in their 2015 Magic Quadrant Report for Business Intelligence. Two major trends / differentiators fall right into the sweet spot I adore:

New demands for advanced analytics

Focus on predictive/prescriptive capabilities

Whether you think this spells out doom for business intelligence as it exists today or not, you cannot deny that these trends in data science and big data can only force us to finally work smarter, and not harder (is that even possible??)

What are your thoughts…?

KPIs in Retail & Store Analytics

I like this post. While I added some KPIs to their list, I think it is a good list to get retailers on the right path…

KPIs in Retail and Store Analytics (continuation of a post made by Abhinav on kpisrus.wordpress.com):

A) If it is a classic brick and mortar retailer:

Retail / Merchandising KPIs:

-Average Time on Shelf

-Item Staleness

-Shrinkage % (includes things like spoilage, shoplifting/theft and damaged merchandise)

Marketing KPIs:

-Coupon Breakage and Efficacy (which coupons drive desired purchase behavior vs. detract)

-Net Promoter Score (“How likely are you to recommend xx company to a friend or family member” – this is typically measured during customer satisfaction surveys and depending on your organization, it may fall under Customer Ops or Marketing departments in terms of responsibility).

-Number of trips (in person) vs. e-commerce site visits per month (tells you if your website is more effective than your physical store at generating shopping interest)
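For anyone who has not calculated Net Promoter Score before, here is a minimal sketch. It assumes the commonly used convention for the 0 to 10 "how likely are you to recommend" question: 9 and 10 count as promoters, 0 through 6 as detractors, and 7 and 8 as passives. The function name and the survey answers are hypothetical.

```python
def net_promoter_score(ratings):
    """Return NPS (-100 to 100) from a list of 0-10 survey answers.

    Assumes the common convention: promoters score 9-10,
    detractors score 0-6, passives (7-8) only count in the total.
    """
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical survey answers (illustrative only).
survey_answers = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
nps = net_promoter_score(survey_answers)
print(f"NPS: {nps:.0f}")
```

Note that NPS is a difference of two percentages, so it can be negative when detractors outnumber promoters.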

B) If it is an e-retailer :

Marketing KPIs:

-Shopping Cart Abandonment %

-Page with the Highest Abandonment

-Dwell time per page (indicates interest)

-Clickstream path for purchasers (like Jamie mentioned: do they arrive via email, a promotion, or a flash sales source like Groupon? And if so, what are the clickstream paths that they take?). This should look like an upside down funnel, where you have the visitors / unique users at the top who enter your site, and then the various paths (pages) they view en route to a purchase.

-Clickstream path for visitors (take Expedia for example…Many people use them as a travel search engine but then jump off the site to buy directly from the travel vendor – understanding this behavior can help you monetize the value of the content you provide as an alternate source of revenue).

-Visit to Buy %

-If direct email marketing is part of your strategy, analyzing click rate is a close second to measuring conversion rate. These are 2 different KPIs, one the king, the other the queen, and both are necessary to understand how effective your email campaign was and whether it warranted the associated campaign cost.
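Several of the e-retailer KPIs above are simple ratios, so here is a minimal sketch of how they might be computed. The definitions are assumptions on my part, since shops differ on denominators (for example, some measure email conversion per click instead of per delivered email). All function names and counts are hypothetical.

```python
def percentage(part, whole):
    """Return part / whole as a percentage, guarding against a zero denominator."""
    if whole == 0:
        raise ValueError("denominator must be non-zero")
    return 100.0 * part / whole


def cart_abandonment_pct(carts_created, orders_completed):
    # Assumed definition: share of carts that never became an order.
    return percentage(carts_created - orders_completed, carts_created)


def visit_to_buy_pct(visits, orders_completed):
    # Assumed definition: share of site visits that ended in a purchase.
    return percentage(orders_completed, visits)


def email_click_rate_pct(emails_delivered, unique_clicks):
    # Assumed definition: unique clicks per delivered email.
    return percentage(unique_clicks, emails_delivered)


def email_conversion_rate_pct(emails_delivered, orders_from_email):
    # Assumed definition: orders attributed to the email per delivered email.
    return percentage(orders_from_email, emails_delivered)


# Hypothetical monthly counts (illustrative only).
print(f"Cart abandonment: {cart_abandonment_pct(400, 100):.1f}%")
print(f"Visit to buy:     {visit_to_buy_pct(2000, 100):.1f}%")
print(f"Email click rate: {email_click_rate_pct(10000, 500):.1f}%")
print(f"Email conversion: {email_conversion_rate_pct(10000, 50):.1f}%")
```

Whatever definitions you pick, write them down and keep them fixed, otherwise month-over-month comparisons stop meaning anything.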

Site Operations KPIs / Marketing KPIs:

-Error % Overall

-Error % by Page (this is highly correlated to the Pages that have the Highest Abandonment, which means you can fix something like the reason for the error, and have a direct path to measure the success of the change).
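To check whether Error % by Page really does track abandonment on your own site, a quick correlation is a reasonable first look. Below is a minimal sketch using a hand-rolled Pearson correlation coefficient. The per-page figures are hypothetical, and a high correlation alone does not prove the errors cause the abandonment; it just tells you where to look first.

```python
import math


def pearson_correlation(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of the same length (at least 2)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a sequence is constant")
    return covariance / math.sqrt(spread_x * spread_y)


# Hypothetical per-page figures (illustrative only): one entry per page.
error_pct_by_page = [0.5, 1.0, 4.0, 8.0]
abandonment_pct_by_page = [20.0, 25.0, 45.0, 70.0]

r = pearson_correlation(error_pct_by_page, abandonment_pct_by_page)
print(f"Correlation between error % and abandonment %: {r:.3f}")
```

If the coefficient is close to 1 on your data, fixing the error on the worst page and re-measuring abandonment gives you the direct before-and-after comparison described above.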

Financial KPIs:

-Average order size per transaction

-Average sales per transaction

-Average number of items per transaction

-Average profit per transaction

-Return on capital invested

-Margin %

-Markup %
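Margin % and Markup % get mixed up all the time, so here is a minimal sketch of the difference. Both start from the same profit figure; margin divides it by the selling price, while markup divides it by the cost. The function names and the price and cost values are hypothetical.

```python
def margin_pct(selling_price, cost):
    """Profit as a percentage of the selling price."""
    if selling_price == 0:
        raise ValueError("selling price must be non-zero")
    return 100.0 * (selling_price - cost) / selling_price


def markup_pct(selling_price, cost):
    """Profit as a percentage of the cost."""
    if cost == 0:
        raise ValueError("cost must be non-zero")
    return 100.0 * (selling_price - cost) / cost


# Hypothetical item (illustrative only): sells for 100, costs 80.
price, cost = 100.0, 80.0
print(f"Margin: {margin_pct(price, cost):.1f}%")
print(f"Markup: {markup_pct(price, cost):.1f}%")
```

For any profitable item the markup percentage will be larger than the margin percentage, because the cost is the smaller denominator.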

I hope this helps. Let me know if you have any questions.

You can reach me at mailto://lauraedell@me.com or you can visit my blog where I have many posts listing out various KPIs by industry and how to best aggregate them for reporting and executive presentation purposes ( http://www.lauraedell.com ).

It was very likely that I would write on KPIs in Retail or Store Analytics since my last post on Marketing and Customer Analytics. The main motive behind retailers looking into BI is the ‘customer’: how they can quickly react to changes in customer demand (or rather, predict customer demand), remove wasteful spending through targeted marketing, exceed customer expectations and hence improve customer retention.

I did some quick research on what companies have been using as measures of performance in the retail industry and compiled a list of KPIs that I would recommend for consideration.

Customer Analytics

With the customer being the key for this industry, it is important to segment customers, especially for strategic campaigns, and to develop relationships for maximum customer retention. Understanding customer requirements and dealing with ever-changing market conditions is the key for a retailer to survive the competition.

- Average order size per transaction

- Average sales per transaction

Awesome Article “Views from the C-Suite: Who’s Big on Big Data” from The Economist

This is an awesome article discussing the whole “big data” thing from the C-level point of view. It is easy to get mired down in the technical weeds of big data, especially since it generates a ton of different definitions depending on who you ask and, usually, where they work, department-wise.

http://pages.platfora.com/rs/platfora/images/Economist-Intelligence-Unit-Big-Data-Exec-Summary.pdf

Let me know what you think.

Big shout out to @platfora for sharing this!

Finance is the Participation Sport of the BI Olympics

IT is no longer the powerhouse that it once was, and unfortunately for CIOs who haven’t embraced change, much of their realm was commoditized by cloud computing powered by the core principles of grid computational engines and schema-less database designs. The whole concept of spending millions of dollars to bring all disparate systems together into one data warehouse has proven modestly beneficial, but if we are being truly honest, what has all that money and time actually yielded, especially towards the bottom line?

And by the time you finished with the EDW, I guarantee it was missing core operational data streams that were then designed into their own sea of data marts. Fast forward a few years, and you probably have some level of EDW, many more data marts, probably one or more cube (ROLAP/MOLAP) applications and n-number of cubes or a massive 1+ hyper-cube(s), and still the business depends on spreadsheets to sit on top of these systems, creating individual silos of information under the desk or in the mind of one individual.

Wait<<<rewind<<< Isn’t that where we started?

Having disparate, ungoverned and untrusted data sources being managed by individuals instead of by enterprise systems of record?

And now we’re back>>>press play to continue>>>

When you stop to think about the last ten years, fellow BI practitioners, you might be scared of your ever-changing role. From a grass-roots effort to a formalized department team, Business Intelligence went from the shadows to the mainstream, and brought with it reports, then dashboards, then KPIs and scorecards, managing by exception, proactive notifications and so on. And bam! We were hit by the first smattering of changes to come when Hadoop and others hit the presses. But we really didn’t grok the true potential and actual meaning of said systems unless we came from a background like mine, either competitively, or from a big data friendly industry group like telecommunications, or from a consultant/implementation p.o.v.

And then social networking took off like gangbusters and mobile became a reality with the introduction of the tablet device (though, I hate to float my boat as always by mentioning my soap box dream spewed at a TDWI conference about the future of mobile BI when the 1st generation iPhone was released).

But that is neither here nor there. And, as always, I digress and am back…

At the same time as we myopically focused on the changing technological landscape around us, a shifting power paradigm was building wherein the Finance organization, once relegated to the back partition of cubicles, where a pin drop was heard ’round the world (or at least, the floor), was growing more and more despondent with not being able to access the data they needed without IT intervention in order to update their monthly forecasts and produce their subsequent P&L, Balance Sheet and Cash Flow Planning statements. And IT’s response was to acquire (for additional millions of dollars) a “BI tool”, aka an ad-hoc reporting application, that would allow them to pull their own data. But by the time it had been installed and the data had been pulled and validated, the Finance team had either found an alternate solution or found the system useful for only a very small sliver of analysis, and went outside of IT to get the additional sources of information they wanted and needed to adapt to the changing business pressures from the convergence of social, mobile and unstructured datasets. And suddenly those once-shiny BI tools seemed like antiquated relics that simply could not handle the sheer data volumes now expected of them, or would crash (unless filtered beyond the point of value). Businesses should not have to adapt their queries to the tool; they need a tool that can adapt to their ever-changing processes and needs.

So if necessity is the mother of invention, Finance was its well-deserving child. And why? The business across the board is starving for information but drowning in data. And Finance is no longer a game of solitaire, understood by few and ignored by many. In fact, Finance has become the participation sport of the BI Olympics, and rightfully so, where departmental collaboration at the fringe of the organization has proven to be the missing link that previously prevented successful top-down planning efforts. Visualization demands made dashboards a thing of the past and called for a better story, vis-à-vis storylines / infographics, to help disseminate more than just the numbers, but the story behind the numbers, to the rest of the organization, or what I like to call the “fringe”.

I remember a few years ago when the biggest challenge was getting data, and often, we joked about how nice it would be to have a sea of data to drown in; an analysts’ buffet-du-jour; a happy paralysis-induced-by said analysis plate was the special of the day, yet only for a few, while the rest was but a gleam in our data-starved eyes.

Looking forward from there, I ask, dear reader, where do we go from here…If it’s a Finance party and we are all invited, what do we bring to the party table as BI practitioners of value? Can we provide the next critical differentiator?

Well, I believe that we can, and that critical differentiator is forward-looking data. Why?

Gartner Group stated that “Predictive data will increase profitability by 20% and that historical data will become a thing of the past” (for a BI practitioner, the last part of that statement should worry you, if you are still resisting the plunge into the predictive analytics pool).

Remember, predictive analytics is a process that allows an organization to get true insight, and it works best when executed amongst a larger group of people to make business users faster and smarter. This makes it a good fit for enterprise needs because, by definition, enterprises offer a larger group of people to work with.

In fact, it was Jack Welch who said, “An organization’s ability to learn, and translate that learning into action rapidly, is the ultimate competitive advantage.”

If you haven’t already, go out and start learning one of the statistical application packages. I suggest “R”, and in the coming weeks I will provide R and SAS scripts (I have experience with both) for those interested in growing their chosen profession and remaining relevant as we weather the sea of business changes.

How Do You Use LinkedIn? (Social Media Infographics)

How often do you refresh your LinkedIn profile pic? Or worse, the content within your profile? Unless you are a sales exec trolling the social networking site or a job seeker, I would surmise not that often; in fact, “rarely” is the most apropos description. Thoughts…? (Yes, she’s back (again), but this time for good, dear readers…) @Laura_E_Edell (#infographics) says thanks to designinfographics.com for her latest content postings!

And just because I call it out, doesn’t mean you will know the best approach to updating your LinkedIn profile. And guess what …there’s an infographic for that! (http://www.linkedin.com/in/lauraerinedell)

Futures According to Laura… Convergence of Cloud and Neural Networking with Mobility and Big Data

It’s been longer and longer between my posts and as always, life can be inferred as the reason for my delay.